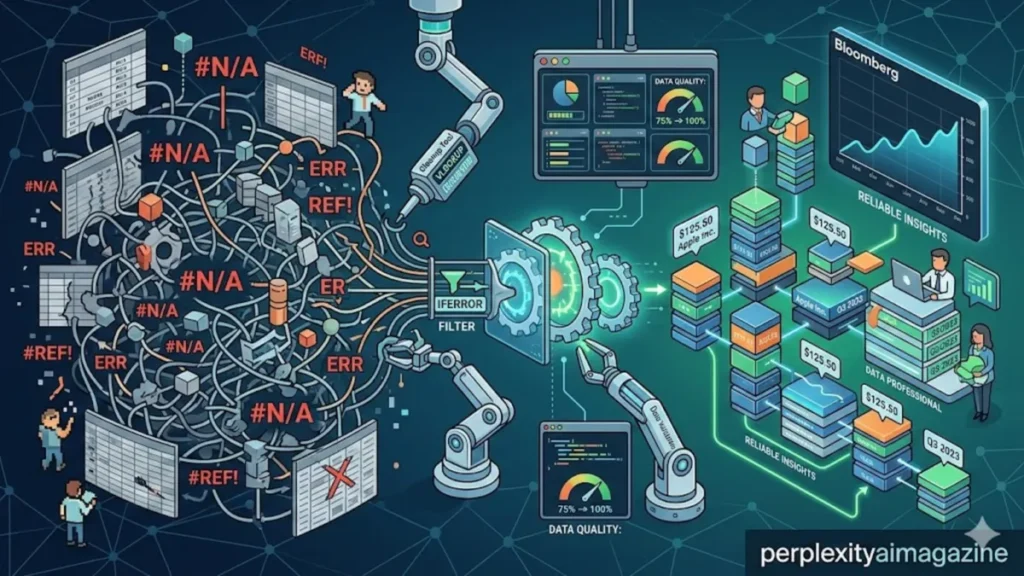

I have seen spreadsheets break under the weight of imperfect data, and nowhere is that more common than in Bloomberg Excel exports. Within the first few seconds of opening a BDH or BDP dataset, analysts are confronted with a familiar problem: columns riddled with “N/A” values, #N/A errors, blanks, and inconsistencies that threaten the integrity of any downstream analysis. The challenge is not simply cosmetic. It is structural, affecting models, dashboards, and ultimately financial decisions.

At its core, filtering out “N/A” values in Excel is about restoring trust in data. The Advanced Filter feature, often overlooked in favor of simpler tools, provides a powerful method to exclude invalid entries while preserving the original dataset. Yet it is only one part of a broader ecosystem of techniques that include formula-based error handling, Power Query transformations, and even external scripting through Python.

Across trading desks, research teams, and asset management firms, these methods are not optional conveniences. They are operational necessities. Bloomberg’s BDH function, for instance, frequently returns #N/A when securities lack historical data for specific dates. Without proper handling, these errors cascade into inaccurate averages, broken charts, and misleading insights.

This article explores how professionals clean Bloomberg data at scale, why Advanced Filter still matters in a modern Excel environment, and how newer tools are reshaping workflows. From criteria ranges to automated pipelines, the story is ultimately about discipline in data handling and the quiet craftsmanship behind every clean spreadsheet.

The Persistent Problem of Bloomberg #N/A Data

Bloomberg’s Excel integration has long been a cornerstone of financial analysis, offering direct access to market data through functions like BDH (Bloomberg Data History) and BDP (Bloomberg Data Point). Yet these functions frequently produce incomplete datasets.

The reasons are structural. Markets do not trade every day. Securities may lack historical records. Corporate actions can disrupt continuity. As a result, Excel outputs often include #N/A errors or text placeholders such as “N/A.”

For analysts, the issue is not merely aesthetic. A single unhandled error can invalidate formulas across an entire workbook. In large datasets, thousands of such entries can accumulate.

“Data quality is not a downstream problem; it’s a foundational one,” says Thomas H. Davenport, a leading authority on analytics. “If your inputs are compromised, your outputs will be misleading, no matter how sophisticated your model is.”

The implications extend beyond spreadsheets. Portfolio managers rely on clean time series for risk modeling. Quantitative analysts depend on uninterrupted datasets for algorithmic strategies. Even minor inconsistencies can distort volatility calculations or trend analyses.

In this environment, cleaning Bloomberg data becomes a critical skill, blending technical knowledge with methodical precision.

Advanced Filter: A Quiet Workhorse

Excel’s Advanced Filter is often overshadowed by simpler tools like AutoFilter, yet it remains one of the most precise methods for isolating valid data.

The process begins with a criteria range. Analysts insert blank rows above their dataset, replicate column headers, and define conditions such as <>N/A. This tells Excel to exclude any entries equal to “N/A.”

What makes Advanced Filter powerful is its logic structure. Conditions placed on the same row apply AND logic, ensuring that only rows meeting all criteria are retained. Separate rows introduce OR logic, allowing for more nuanced filtering.

Unlike standard filters, Advanced Filter can copy results to a new location, preserving the original dataset. This non-destructive approach is essential in professional workflows where auditability matters.

Example Criteria Setup

| Ticker | Price | Volume |
|---|---|---|
| <>N/A | <>N/A | <>N/A |

When applied, this setup ensures that only rows with valid entries across all three columns are extracted.
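To make the AND/OR behavior of a criteria range concrete, here is a minimal Python sketch that imitates it outside Excel. It is an illustration only, not how Excel implements the feature: the helper names `not_equal` and `advanced_filter`, the tickers other than IBM, and all prices and volumes are made up for the example.

```python
from typing import Callable, Dict, List

Row = Dict[str, str]
Condition = Callable[[str], bool]


def not_equal(text: str) -> Condition:
    """Imitate a criterion such as <>N/A using a case-insensitive text comparison."""
    return lambda cell: cell.upper() != text.upper()


def advanced_filter(rows: List[Row], criteria: List[Dict[str, Condition]]) -> List[Row]:
    """Return copies of the rows that satisfy at least one criteria row.

    Conditions inside one criteria row are combined with AND;
    separate criteria rows are combined with OR.
    """
    return [
        dict(row)
        for row in rows
        if any(all(test(row[col]) for col, test in crit.items()) for crit in criteria)
    ]


# Hypothetical sample data; values are invented for illustration.
data = [
    {"Ticker": "IBM US Equity", "Price": "250.10", "Volume": "3100000"},
    {"Ticker": "XYZ US Equity", "Price": "N/A", "Volume": "120000"},
    {"Ticker": "ABC US Equity", "Price": "41.75", "Volume": "N/A"},
    {"Ticker": "DEF US Equity", "Price": "N/A", "Volume": "N/A"},
]

# One criteria row with three conditions: every condition must hold (AND).
all_valid = [
    {"Ticker": not_equal("N/A"), "Price": not_equal("N/A"), "Volume": not_equal("N/A")}
]
and_result = advanced_filter(data, all_valid)

# Two criteria rows: a data row is kept if either criteria row matches (OR).
price_or_volume = [{"Price": not_equal("N/A")}, {"Volume": not_equal("N/A")}]
or_result = advanced_filter(data, price_or_volume)

print([row["Ticker"] for row in and_result])
print([row["Ticker"] for row in or_result])
```

The single-row criteria keep only the IBM row, while the two-row version keeps every row that has at least one valid field. Because the function returns copies, the original list is left untouched, which mirrors the copy-to-another-location option.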

“Advanced Filter is one of Excel’s most underutilized features,” notes Bill Jelen, Excel expert and author. “It’s faster and more flexible than most people realize, especially for large datasets.”

Despite its power, Advanced Filter has limitations. It struggles with true Excel errors like #N/A, which require additional handling through formulas or helper columns.

Handling #N/A Errors with Formulas

Where Advanced Filter falls short, formulas step in. Excel provides several built-in functions designed to manage errors efficiently.

The most widely used is IFERROR, which replaces any error with a specified value. For Bloomberg data, this often means converting #N/A into blanks.

For example:

=IFERROR(BDH(...),"")

This approach prevents errors from propagating through calculations while keeping datasets visually clean.

Another method uses ISNA for more targeted control:

=IF(ISNA(BDH(...)),0,BDH(...))

This allows analysts to replace errors with zeros or other meaningful values, depending on the context.
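The difference between the two formulas is easier to see side by side. The sketch below is a rough Python analogue, assuming Excel errors are represented as plain strings; `iferror` and `if_isna` are hypothetical helpers written for this example, not part of any library.

```python
# Excel error values modelled as strings (an assumption made for this sketch).
EXCEL_ERRORS = {"#N/A", "#DIV/0!", "#VALUE!", "#REF!", "#NAME?", "#NUM!", "#NULL!"}


def iferror(value, fallback):
    """Like IFERROR: replace any error marker with the fallback."""
    return fallback if value in EXCEL_ERRORS else value


def if_isna(value, fallback):
    """Like IF(ISNA(x), fallback, x): replace only #N/A and let other errors through."""
    return fallback if value == "#N/A" else value


cells = [101.5, "#N/A", "#DIV/0!", 99.0]

blanked = [iferror(c, "") for c in cells]
zeroed = [if_isna(c, 0) for c in cells]

print(blanked)  # [101.5, '', '', 99.0]
print(zeroed)   # [101.5, 0, '#DIV/0!', 99.0]
```

The ISNA-style version leaves the #DIV/0! visible. That can be the safer choice when an error other than #N/A points to a genuine formula problem rather than missing market data.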

Comparison of Formula Techniques

| Method | Use Case | Output Handling |
|---|---|---|
| IFERROR | General error replacement | Blank, zero, or custom |
| ISNA | Specific #N/A detection | Conditional logic |
| FILTER | Dynamic array filtering (Excel 365) | Clean dataset output |

These formulas are particularly valuable in large datasets, where manual filtering becomes impractical.

“Automation in Excel is not about complexity; it’s about consistency,” says Felienne Hermans, professor of computer science and spreadsheet research. “Functions like IFERROR reduce human error and improve reproducibility.”

Power Query: The Modern Transformation Engine

As datasets grow larger and workflows become more automated, Power Query has emerged as a preferred solution for cleaning Bloomberg data.

Integrated into Excel as “Get & Transform,” Power Query allows users to import, clean, and reshape data without altering the original source. When Bloomberg data is loaded into Power Query, cells holding #N/A typically arrive as Error values rather than usable numbers, and they can then be converted to nulls or removed as an explicit step.

From there, analysts can apply a series of transformations:

- Replace errors with nulls or zeros

- Remove blank rows

- Fill missing values using “Fill Down”

- Filter out invalid entries

The real advantage lies in repeatability. Once a transformation pipeline is created, it can be refreshed automatically whenever new data is imported.
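The idea of a fixed, replayable sequence of steps can be sketched in plain Python. This is not Power Query's M language and does not use any Power Query API; the step functions, the `run_pipeline` helper, and the sample rows are all assumptions made for illustration.

```python
from typing import Callable, List, Optional

Row = List[Optional[object]]
Step = Callable[[List[Row]], List[Row]]

ERROR_MARKERS = {"#N/A", "N/A"}


def replace_errors(rows: List[Row]) -> List[Row]:
    """Turn error markers into None, the analogue of a null."""
    return [[None if cell in ERROR_MARKERS else cell for cell in row] for row in rows]


def remove_blank_rows(rows: List[Row]) -> List[Row]:
    """Drop rows in which every cell is empty."""
    return [row for row in rows if any(cell not in (None, "") for cell in row)]


def fill_down(rows: List[Row]) -> List[Row]:
    """Carry the last non-null value in each column down into later nulls."""
    filled: List[Row] = []
    last_seen: Row = [None] * len(rows[0]) if rows else []
    for row in rows:
        new_row = [cell if cell is not None else last_seen[i] for i, cell in enumerate(row)]
        last_seen = new_row
        filled.append(new_row)
    return filled


def drop_incomplete(rows: List[Row]) -> List[Row]:
    """Filter out rows that still contain a null after filling."""
    return [row for row in rows if all(cell is not None for cell in row)]


def run_pipeline(rows: List[Row], steps: List[Step]) -> List[Row]:
    """Apply each step in order; every step returns new rows, so the input is preserved."""
    for step in steps:
        rows = step(rows)
    return rows


PIPELINE: List[Step] = [replace_errors, remove_blank_rows, fill_down, drop_incomplete]

# Invented sample rows: [date, price].
raw = [
    ["2025-01-01", "N/A"],
    ["2025-01-02", 219.9],
    ["2025-01-03", "#N/A"],
    [None, None],
    ["2025-01-06", 222.7],
]

cleaned = run_pipeline(raw, PIPELINE)
print(cleaned)
```

Re-running `run_pipeline` on a fresh export applies exactly the same steps in the same order, which is the property that makes a refreshable query attractive. Note that the first row is dropped because there is no earlier value to fill from.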

“Power Query changes the paradigm from manual cleaning to automated transformation,” explains Microsoft documentation. “It enables users to build reusable data preparation workflows.”

This capability is especially valuable in environments where Bloomberg data is refreshed daily or even intraday.

Minimizing Errors at the Source

While cleaning techniques are essential, reducing errors at the source can significantly improve efficiency.

Bloomberg’s BDH function includes parameters designed to minimize #N/A outputs:

- Fill=P (Fill Previous): Propagates the last valid value

- Days=T (Trading Days): Excludes non-trading days

- NT=Fill: Handles weekends and holidays

For example:

=BDH("IBM US Equity","PX_LAST","01/01/2025","03/19/2026","Days=T","Fill=P")

This configuration reduces gaps in time series data, making subsequent cleaning easier.

BDH Parameter Effects

| Parameter | Function | Impact on Data Quality |
|---|---|---|
| Fill=P | Forward fills missing values | Reduces gaps |
| Days=T | Limits to trading days | Removes irrelevant rows |
| NT=Fill | Handles non-trading days | Improves continuity |

By combining these parameters with downstream cleaning, analysts can achieve near-complete datasets.
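The effect of the two main options described above can be modelled without a Bloomberg connection. The sketch below does not call BDH and makes no claim about its internals; it only imitates the two ideas (carry the previous value forward, or keep trading days only) on invented prices, with function names chosen for this example.

```python
from datetime import date, timedelta
from typing import Dict, Iterator, List, Optional, Tuple

Series = List[Tuple[date, Optional[float]]]

# Made-up closing prices; the weekend of 4-5 January 2025 has no entry.
closes: Dict[date, float] = {
    date(2025, 1, 2): 219.9,  # Thursday
    date(2025, 1, 3): 222.6,  # Friday
    date(2025, 1, 6): 222.7,  # Monday
}


def calendar_days(start: date, end: date) -> Iterator[date]:
    """Yield every calendar day from start to end inclusive."""
    day = start
    while day <= end:
        yield day
        day += timedelta(days=1)


def series_all_days(prices: Dict[date, float], start: date, end: date) -> Series:
    """Every calendar day, with None where no price exists."""
    return [(d, prices.get(d)) for d in calendar_days(start, end)]


def fill_previous(series: Series) -> Series:
    """Carry the last known price into later gaps (the idea behind a previous-value fill)."""
    filled: Series = []
    last: Optional[float] = None
    for d, price in series:
        if price is not None:
            last = price
        filled.append((d, last))
    return filled


def trading_days_only(series: Series) -> Series:
    """Keep only dates that have a price (the idea behind a trading-day calendar)."""
    return [(d, p) for d, p in series if p is not None]


raw = series_all_days(closes, date(2025, 1, 2), date(2025, 1, 6))
filled = fill_previous(raw)
trading = trading_days_only(raw)

print(len(raw), sum(1 for _, p in raw if p is None))
print([p for _, p in filled])
print([d.isoformat() for d, _ in trading])
```

The two approaches answer different questions: the filled series has a value for every calendar day, including the weekend, while the trading-day series is shorter but contains only observed prices.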

Beyond Excel: Automation and Scripting

For large-scale operations, Excel alone may not be sufficient. Many professionals turn to scripting languages like Python for more advanced data processing.

Using libraries such as pandas, analysts can handle millions of rows efficiently:

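import pandas as pd

df = pd.read_csv('bloomberg.csv')  # text markers such as 'N/A' and '#N/A' are parsed as NaN by default

df = df.ffill()  # forward-fill gaps first; replaces the deprecated fillna(method='ffill')

df = df.dropna()  # then drop rows that still contain missing values, e.g. gaps before the first valid one

df.to_excel('cleaned.xlsx', index=False)  # needs an Excel writer engine such as openpyxl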

This approach offers several advantages:

- Speed and scalability

- Integration with APIs

- Advanced statistical processing

VBA macros provide another layer of automation within Excel itself. A simple macro can remove error cells or delete rows with blanks in seconds.

Yet these tools come with trade-offs. They require technical expertise and may introduce complexity into workflows that must remain accessible to non-programmers.

The Human Element in Data Cleaning

Despite the growing sophistication of tools, data cleaning remains as much an art as a science. Analysts must make judgment calls about how to handle missing values, whether to fill gaps or exclude data, and how to balance completeness with accuracy.

“Cleaning data is not just a technical task; it’s a conceptual one,” says Hadley Wickham, a leading data scientist. “You’re making decisions about what the data should represent.”

In financial contexts, these decisions carry significant weight. Filling missing prices with previous values may be appropriate for certain analyses but misleading for others.

The process requires domain knowledge, attention to detail, and an understanding of the underlying data.
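A small worked example shows why the fill-or-exclude decision is not neutral. The sketch below, using invented prices and only the standard library, compares the standard deviation of simple returns when gaps are forward-filled versus dropped; the helper names are hypothetical.

```python
from statistics import pstdev
from typing import List, Optional


def simple_returns(prices: List[float]) -> List[float]:
    """Period-over-period returns: (next - current) / current."""
    return [(b - a) / a for a, b in zip(prices, prices[1:])]


def forward_fill(prices: List[Optional[float]]) -> List[float]:
    """Replace each gap with the most recent observed price (assumes the first value exists)."""
    filled: List[float] = []
    for price in prices:
        filled.append(price if price is not None else filled[-1])
    return filled


# Invented series with two missing observations.
observed: List[Optional[float]] = [100.0, None, None, 103.0, 101.0]

dropped = [p for p in observed if p is not None]
filled = forward_fill(observed)

vol_dropped = pstdev(simple_returns(dropped))
vol_filled = pstdev(simple_returns(filled))

print(f"gaps dropped: {vol_dropped:.4f}")
print(f"gaps filled:  {vol_filled:.4f}")
```

Forward-filling inserts zero returns for the missing periods, so the measured volatility comes out lower than when the gaps are dropped. Neither number is automatically correct: dropping the gaps treats a multi-day move as a single period. Which distortion is acceptable depends on the analysis.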

Toward Cleaner, Smarter Workflows

The evolution of Excel tools reflects a broader shift in how organizations approach data. What was once a manual, error-prone process is becoming increasingly automated and standardized.

Advanced Filter still has its place, offering precision and control. Formulas provide flexibility and scalability. Power Query introduces repeatability and efficiency. External tools extend capabilities beyond Excel’s limits.

Together, these methods form a toolkit that allows analysts to transform messy Bloomberg outputs into reliable datasets.

The challenge is not choosing one method over another, but understanding how they complement each other.

Takeaways

- Bloomberg Excel data frequently contains #N/A errors due to missing or non-trading data.

- Advanced Filter offers precise, non-destructive filtering using criteria ranges.

- IFERROR and ISNA formulas provide efficient error handling within large datasets.

- Power Query enables automated, repeatable data cleaning workflows.

- BDH parameters like Fill=P and Days=T reduce errors at the source.

- Python and VBA extend capabilities for large-scale or automated processing.

- Data cleaning decisions require both technical skill and contextual judgment.

Conclusion

I often think of data cleaning as the invisible foundation of modern analysis. It rarely receives attention, yet it determines the reliability of everything built on top of it. In the context of Bloomberg Excel data, the challenge is particularly acute. The presence of #N/A values is not an anomaly but an expectation.

What has changed is the toolkit available to address it. From the structured logic of Advanced Filter to the automation of Power Query, Excel has evolved into a far more capable platform. At the same time, external tools like Python have expanded the boundaries of what analysts can achieve.

Still, the core principle remains unchanged: clean data is trustworthy data. No tool can replace the need for careful decision-making and an understanding of context.

As financial datasets continue to grow in size and complexity, the ability to manage imperfections will become even more critical. The spreadsheets may look cleaner, but behind them lies a sophisticated interplay of techniques, each quietly ensuring that the numbers tell the truth.


FAQs

What is the best way to remove “N/A” values in Excel?

Advanced Filter works well for structured datasets, while IFERROR formulas and Power Query are better suited to dynamic or large-scale cleaning.

Can Advanced Filter remove #N/A errors directly?

No, it works best with text values like “N/A.” For true Excel errors, use helper columns or formulas like IFERROR.

Why does Bloomberg BDH return #N/A values?

Because data may be unavailable for certain dates, securities, or non-trading days.

Is Power Query better than formulas?

For automation and repeatability, yes. But formulas offer flexibility for quick, inline fixes.

Should I replace #N/A with zeros?

It depends on context. In some analyses, zeros may distort results, so blanks or forward-filled values may be preferable.