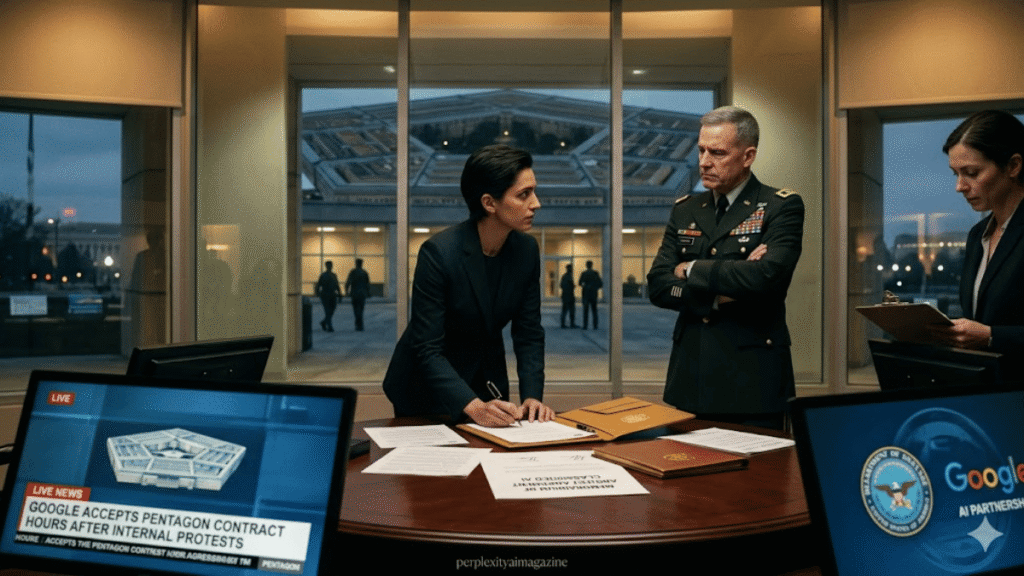

Google signed a classified agreement with the United States Department of Defense on Monday, authorizing the Pentagon to deploy its Gemini AI models across secret military networks for “any lawful government purpose.” It did so hours after more than 600 of the company’s own employees publicly demanded that CEO Sundar Pichai refuse exactly such an arrangement. The deal has since sparked a furious internal backlash, with senior researchers at Google DeepMind describing themselves as “speechless” and “incredibly ashamed.” – Google Signs Classified AI Deal With Pentagon.

The open letter, signed by employees across Google DeepMind and Google Cloud, had warned that classified military use of Google’s AI systems was not appropriate because, on air-gapped classified networks, Google has no ability to monitor how Gemini is being used, what queries are being run, or what decisions are being made with the outputs. “As people working on AI, we know that these systems can centralize power and that they do make mistakes,” the letter stated. The employees argued that advisory restrictions on mass surveillance and autonomous weapons — provisions included in the Google Gemini Pentagon deal — were effectively unenforceable in classified settings where Google has no visibility.

The contract permits the Pentagon to use Google’s AI for any lawful government purpose, mirroring the language that rival AI companies including OpenAI had successfully negotiated. These are the same terms that Anthropic famously refused to accept, which resulted in the Pentagon declaring Anthropic a supply chain risk earlier this year. Google’s agreement includes language stating the AI “should not be used” for mass surveillance or autonomous weapons systems without appropriate human oversight and control, but critics immediately noted that “should not” is advisory, not a contractual prohibition. The agreement also allows the government to request adjustments to safety settings.

Andreas Kirsch, a senior research scientist at Google DeepMind, posted publicly on X that he was “speechless at Google signing a deal to use our AI models for classified tasks,” adding that he had woken to “a worst-case version” of what employees had feared. The reaction echoes — and in some ways surpasses — Google’s 2018 Project Maven crisis, when approximately 4,000 employees signed a petition over AI-assisted drone footage analysis and Google ultimately abandoned the contract.

The trajectory from Maven to this week’s classified deal illustrates how far the company’s internal ethics landscape has shifted. In February 2025, Google removed from its official AI principles the longstanding pledge not to build weapons or surveillance technologies, citing competitive dynamics. By December 2025, the Pentagon had launched GenAI.mil, a platform powered by Gemini and available to all defense personnel. In March 2026, Gemini AI agents were deployed across the Pentagon’s three-million-strong unclassified workforce. Monday’s agreement extended that access to classified systems.

In a parallel disclosure, Bloomberg reported Tuesday that Google had quietly withdrawn from a $100 million Pentagon prize competition to develop voice-controlled autonomous drone swarm technology, a withdrawal that followed an internal ethics review. The juxtaposition has not been lost on observers: Google drew a line against building specific weapons systems while simultaneously opening its general-purpose AI to classified military use on networks it cannot monitor.

Sundar Pichai opened Google’s Cloud Next 2026 conference earlier this month touting 750 million Gemini users and a $240 billion cloud backlog. The Pentagon deal represents both the commercial ambition and the ethical tensions that now define one of the industry’s most powerful companies.