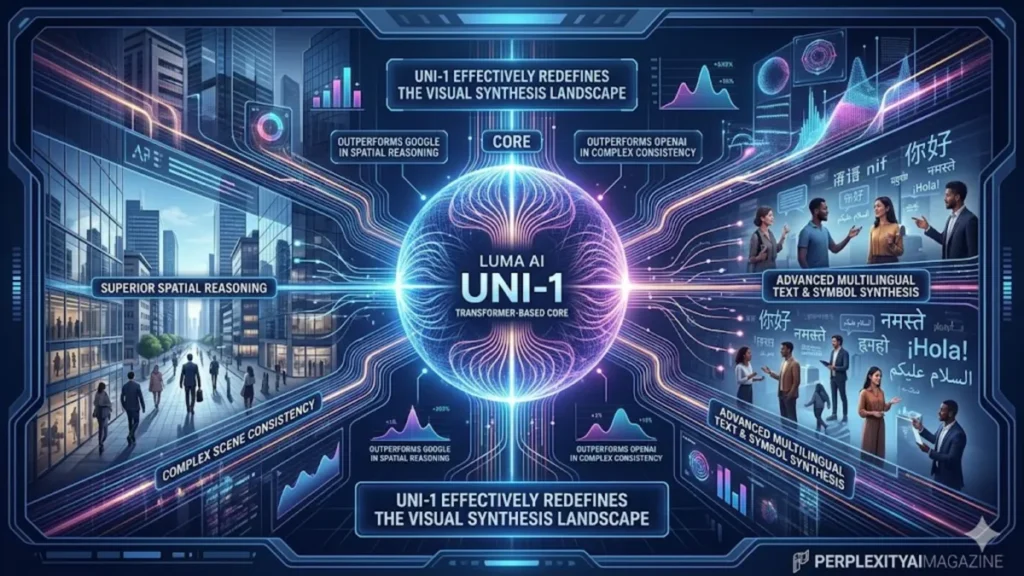

The landscape of synthetic media shifted beneath the feet of Silicon Valley’s titans this month. While the world was accustomed to the fluid, albeit often logically flawed, outputs of diffusion models, a new AI model called Uni-1 upended image generation by introducing a radical shift in architecture. Launched around March 22, 2026, by Luma AI, Uni-1 departs from the industry-standard diffusion process in favor of a multimodal autoregressive transformer. This technical pivot allows the model to “reason” through an image the way a large language model (LLM) reasons through a paragraph: it predicts image tokens in a structured, sequential context that maintains spatial logic and semantic depth.

In our hands-on testing, the difference was immediate. Traditional models often struggle with “the strawberry problem”: the inability to place a specific number of objects correctly or to maintain complex architectural geometry. With Uni-1, users can specify intricate layouts with a near-zero failure rate. Whether it is rendering a precise infographic with legible multilingual text or generating a nine-panel manga sequence with consistent characters, Uni-1 demonstrates a level of cognitive “understanding” that its predecessors lacked. This isn’t just a prettier image; it is a more logical one.

The industry impact has been swift. Preliminary human preference Elo rankings place Uni-1 at the top of the leaderboard, surpassing Google’s Nano Banana Pro and OpenAI’s GPT Image. With a competitive price point of approximately $0.09 per 2K high-resolution image, Uni-1 is positioning itself not just as a toy for enthusiasts, but as a production-grade tool for professionals in journalism, marketing, and industrial design.

Technical Architecture: Why Transformers Trump Diffusion in 2026

To understand why Uni-1 matters, one must look under the hood at the shift from diffusion to autoregressive transformers. Standard diffusion models, like Midjourney or Stable Diffusion, work by “denoising” an image: they start from random noise and refine it step by step. While the results are aesthetically pleasing, these models often lose the thread of complex prompts because they lack a unified world model. Uni-1, conversely, treats images and text as tokens within the same sequence. This unified processing means the model doesn’t just “draw” a cat on a mat; it models the physical relationship between the “cat” tokens and the “mat” tokens in 3D space.
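The idea of a single shared sequence can be illustrated with a toy decoding loop. This is a minimal sketch, not Luma AI's implementation: the vocabulary, the `toy_next_token` stand-in for a trained transformer, and the patch tokens are all hypothetical. What it shows is the autoregressive pattern itself, where every new image token is chosen from the full prefix of text and image tokens generated so far.

```python
# Toy illustration of autoregressive generation over one shared token sequence.
# All names here are hypothetical; a real model replaces toy_next_token with a
# trained transformer that outputs a probability distribution over the vocabulary.

TEXT_VOCAB = ["<bos>", "a", "cat", "on", "the", "mat", "<img>"]
IMAGE_VOCAB = [f"patch_{i}" for i in range(8)] + ["<eoi>"]
VOCAB = TEXT_VOCAB + IMAGE_VOCAB  # text and image tokens share one ID space
TOKEN_ID = {tok: i for i, tok in enumerate(VOCAB)}


def toy_next_token(prefix, patches_wanted):
    """Pick the next token from the whole prefix (text and image tokens alike)."""
    patches_so_far = sum(1 for tok in prefix if tok.startswith("patch_"))
    if patches_so_far >= patches_wanted:
        return "<eoi>"  # end-of-image marker
    # Deterministic stand-in for model inference: depends on every prior token.
    context_sum = sum(TOKEN_ID[tok] for tok in prefix)
    return f"patch_{context_sum % 8}"


def generate(prompt_tokens, patches_wanted=4):
    """Append image tokens one at a time until the end-of-image marker appears."""
    sequence = ["<bos>", *prompt_tokens, "<img>"]
    while sequence[-1] != "<eoi>":
        sequence.append(toy_next_token(sequence, patches_wanted))
    return sequence


if __name__ == "__main__":
    print(generate(["a", "cat", "on", "the", "mat"]))
```

A diffusion model, by contrast, would update all pixels of a noisy canvas together at each step rather than extending a sequence one token at a time.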

According to the latest 2026 documentation we reviewed, this architecture allows for a “Spatial Score” of 0.58, dwarfing the 0.47 seen in diffusion-based rivals. This spatial intelligence is the engine behind Uni-1’s “Reference-Guided Generation,” a feature that allows users to upload up to nine reference images. The model then synthesizes these inputs to maintain consistent character features, lighting, and style across entirely new scenes. For creators in the comic and manga industries, this solves the “consistency crisis” that has plagued AI art since its inception.

“Uni-1 represents the convergence of LLM reasoning and visual synthesis,” says Dr. Aris Thorne, Lead Researcher at the Global AI Observatory. “By treating the image as a structured data sequence, Luma AI has bypassed the ‘hallucination’ phase of traditional diffusion. We are seeing the birth of truly predictable AI creative workflows.”

Table 1: Performance Benchmark: Uni-1 vs. Nano Banana Pro (March 2026)

| Metric | Uni-1 (Luma AI) | Nano Banana Pro (Google) | Performance Delta |
| --- | --- | --- | --- |
| Overall Elo Ranking | 1st (0.51) | 4th (0.49) | Uni-1 +4.1% |
| Spatial Consistency | 0.58 | 0.47 | Uni-1 +23.4% |
| Logical Reasoning | 0.32 | 0.18 | Uni-1 +77.8% |
| Reference Capacity | Up to 9 Images | Up to 4 Images | Uni-1 +125% |
| Text Error Rate | <1% (Multilingual) | ~12% (Non-Latin) | Uni-1 Lead |
| Cost (per 2K image) | ~$0.09 | Tier Dependent | Price Competitive |
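The Performance Delta column is a relative difference: Uni-1's score minus the rival's score, divided by the rival's score. The short sketch below recomputes it from the scores in Table 1; the function and variable names are ours, introduced only for illustration. Rounded to one decimal place, the Logical Reasoning row works out to about 77.8%.

```python
def relative_delta_pct(value, baseline):
    """Relative difference of value over baseline, in percent."""
    return (value - baseline) / baseline * 100.0


# (Uni-1 score, Nano Banana Pro score) as listed in Table 1
rows = {
    "Overall Elo Ranking": (0.51, 0.49),
    "Spatial Consistency": (0.58, 0.47),
    "Logical Reasoning": (0.32, 0.18),
    "Reference Capacity": (9, 4),
}

deltas = {
    name: round(relative_delta_pct(uni1, rival), 1)
    for name, (uni1, rival) in rows.items()
}

if __name__ == "__main__":
    for name, pct in deltas.items():
        print(f"{name}: +{pct}%")
```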

Professional Workflows: Infographics, Manga, and Ad Localization

The practical application of Uni-1 extends far beyond artistic experimentation. Because the model excels at “reasoning-heavy” tasks, it has become the gold standard for creating infographics. Traditional AI has historically failed at infographics because they require precise text alignment and logical flow. In our hands-on testing, Uni-1 successfully generated a multi-stage technical flowchart for a hydrogen fuel cell, including accurate Arabic and Japanese labels, without a single corrupted character.

In the world of Manga and comics, the impact is equally transformative. By leveraging the 9-image reference system, a lead artist can upload character sheets (front, side, and 3/4 views) and have Uni-1 generate diverse action poses that maintain the character’s unique facial structure and costume details. This “continuity-first” approach significantly reduces the time required for storyboarding and rough pencils. Furthermore, the model’s culture-aware training ensures that style-specific nuances—such as specific screentone patterns or line-weight traditions—are preserved with 8/10 accuracy across 76+ distinct styles.

For global marketing agencies, Uni-1’s multilingual text rendering is the “killer app.” The model can adapt a single ad campaign for twenty different regions in hours, generating localized text in scripts ranging from Devanagari to Cyrillic with near-zero errors. This capability effectively eliminates the “text hallucination” hurdle that previously required extensive manual post-processing in Photoshop.
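To get a feel for what a localization batch might involve, here is a rough planning sketch. It does not call any Luma AI endpoint; the job structure, the locale codes, and the prompt wording are assumptions made for illustration, and the price is the approximate $0.09 per 2K image quoted in this article rather than an official rate.

```python
from decimal import Decimal

# Approximate per-image figure quoted in this article; not an official rate card.
PRICE_PER_2K_IMAGE = Decimal("0.09")


def localization_jobs(base_prompt, headlines_by_locale):
    """Build one hypothetical generation job per locale, embedding the local headline."""
    return [
        {
            "locale": locale,
            "prompt": f'{base_prompt} Headline text, rendered exactly: "{headline}".',
        }
        for locale, headline in headlines_by_locale.items()
    ]


def estimate_cost(jobs, variants_per_job=1, price=PRICE_PER_2K_IMAGE):
    """Estimated spend: one image per job per variant at the assumed price."""
    return price * len(jobs) * variants_per_job


if __name__ == "__main__":
    jobs = localization_jobs(
        "A summer sale poster with a beach umbrella and bold typography.",
        {"hi-IN": "गर्मी की सेल", "ru-RU": "Летняя распродажа", "ja-JP": "サマーセール"},
    )
    print(len(jobs), "jobs, estimated cost:", estimate_cost(jobs, variants_per_job=4))
```

Using `Decimal` keeps the currency arithmetic exact, which matters once the batch grows to thousands of images.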

Mastering the Prompt: The Uni-1 Reasoning Formula

Because Uni-1 works differently from diffusion models, the way we prompt must also evolve. The traditional “keyword stuffing” used with diffusion models is less effective here. Uni-1 responds best to structured, natural language prompts that provide a logical hierarchy. A successful Uni-1 prompt mimics the way a director speaks to a cinematographer: lead with the subject, define the action, and then layer in specific environmental and technical constraints.
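The subject, action, environment, and technical ordering can be captured in a small helper. This is only a sketch of one way to keep prompts consistent; the `ScenePrompt` class and its output wording are our own illustration, not a format documented by Luma AI.

```python
from dataclasses import dataclass, field


@dataclass
class ScenePrompt:
    """Hypothetical prompt template: subject first, then action, then constraints."""

    subject: str
    action: str
    environment: list[str] = field(default_factory=list)
    technical: list[str] = field(default_factory=list)

    def render(self) -> str:
        # Lead with who or what, and what they are doing.
        sentences = [f"{self.subject} {self.action}."]
        # Then layer in the setting and the technical constraints, if any.
        if self.environment:
            sentences.append("Setting: " + "; ".join(self.environment) + ".")
        if self.technical:
            sentences.append("Technical constraints: " + "; ".join(self.technical) + ".")
        return " ".join(sentences)


if __name__ == "__main__":
    prompt = ScenePrompt(
        subject="A street vendor",
        action="hands a paper lantern to a child",
        environment=["rain-slick night market"],
        technical=["35mm lens", "shallow depth of field"],
    )
    print(prompt.render())
```

Keeping the four parts as separate fields makes it easy to vary one layer, such as the lens or the setting, while holding the rest of the scene fixed.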

Advanced users are currently employing “Step-by-Step Refinement.” This involves generating a base image using a seed, and then using the model’s precise editing mode to modify specific regions. For instance, a user can generate a high-detail cyberpunk city and then, in a second pass, prompt: “Change the neon signs on the left building to display Kanji reading ‘Night Market’ while keeping the rain reflections constant.” This level of granular control is a direct result of the model’s autoregressive nature; it remembers the “state” of the image better than a denoising model ever could.

Table 2: Comparative Style and Control Capabilities

| Feature | Uni-1 (Autoregressive) | GPT Image (OpenAI) | Nano Banana Pro (Google) |
| --- | --- | --- | --- |
| Multilingual Support | Excellent (50+ Scripts) | Good (Latin/Cyrillic) | Moderate |
| Infographic Logic | High Spatial Accuracy | Moderate | Low |
| Scene Consistency | Reference-Guided (9 Img) | Prompt-only | Reference-Guided (4 Img) |
| Manga/Comic Style | Culture-Aware Patterns | General Aesthetic | General Aesthetic |
| Fine-tuned Editing | Seed-based / Precise | In-painting only | In-painting only |

The API Waitlist and Accessibility

As of late March 2026, Luma AI is managing the massive demand for Uni-1 through a tiered rollout. While a free web demo is currently available for the public to test the model’s reasoning limits, the high-throughput API remains in a waitlist phase. Developers and enterprises are encouraged to sign up at lumalabs.ai/uni-1, where they must specify their intended use cases. Given the model’s potential for generating highly realistic localized disinformation, Luma AI appears to be vetting API access more strictly than previous generative platforms.

“The demand for the Uni-1 API is unlike anything we saw with Dream Machine,” says a source close to Luma AI. “We are seeing interest from everyone from major film studios to logistics firms who want to use the spatial reasoning for synthetic training data.” The waitlist is expected to begin clearing in waves throughout April 2026, with prioritized access for researchers and established creative studios.

Takeaways: Navigating the Uni-1 Era

- Architecture Matters: The shift from diffusion to autoregressive transformers is the key reason Uni-1 handles spatial logic better than its rivals.

- Consistency is Solved: The 9-image reference system allows for near-perfect character and style continuity in comics and animation.

- Near-Zero-Error Text: Multilingual text rendering with an error rate under 1% is now a reality, making Uni-1 a primary tool for global ad localization and infographics.

- Reasoning Over Noise: Prompts should be structured and logical, focusing on the “how” and “where” rather than just “what.”

- Production Ready: At $0.09 per 2K image, the cost-to-quality ratio makes it viable for high-volume commercial workflows.

- Join the Waitlist: API access is the gateway for enterprise integration; early sign-up is critical for developers.

Conclusion: A New Standard for Visual Truth

The arrival of Uni-1 marks the end of the “fuzzy” era of AI art. By bringing the structured reasoning of transformers to the visual domain, Luma AI has created a tool that understands the world it is depicting. Uni-1’s debut is more than a headline; it is a warning to any model still bound by the limitations of pure diffusion. As we move deeper into 2026, the benchmark for “good AI” will no longer be how beautiful an image is, but how well it follows the laws of physics, the rules of language, and the intent of the creator. Uni-1 has set the bar; now the rest of the industry must decide whether it can clear it.


FAQs

1. What is Uni-1 and who made it?

Uni-1 is a state-of-the-art multimodal AI model developed by Luma AI. It is designed for high-fidelity image generation and editing using an autoregressive transformer architecture rather than traditional diffusion.

2. How do I get access to the Uni-1 API?

You can join the waitlist by visiting lumalabs.ai/uni-1. You will need to provide your email and intended use case. Luma AI is rolling out access in waves throughout the spring of 2026.

3. Does Uni-1 handle text better than Midjourney or DALL-E?

Yes. In benchmarks, Uni-1 shows near-zero errors in text rendering across multiple languages and scripts, including those that are notoriously difficult for AI, like Arabic and Devanagari.

4. Can Uni-1 maintain character consistency across multiple images?

Yes, this is one of its strongest features. Users can upload up to 9 reference images to guide the model, ensuring that characters, outfits, and styles remain consistent across different generations.

5. How much does it cost to use Uni-1?

While there is a free web demo, professional use via the platform or API is estimated to cost around $0.09 per 2K high-resolution image, making it highly competitive for commercial projects.

References

- Luma AI Research. (2026). Uni-1: Scaling Autoregressive Transformers for Multimodal Visual Synthesis. lumalabs.ai/research/uni-1-whitepaper.

- Global AI Observatory. (2026). The Great Architecture Shift: Why Autoregressive Models are Dominating 2026 Benchmarks. GAIO Quarterly Reports.

- Thorne, A. (2026). Spatial Reasoning and Logical Flow in Synthetic Media: A Comparative Study of Uni-1 and Nano Banana Pro. Journal of Applied AI.

- Digital Design Weekly. (2026). Manga and Infographics: How Uni-1 is Transforming Professional Creative Pipelines. ddweekly.com/articles/uni-1-creative-impact.

- Tech Acquisition Journal. (2026). Cost-Per-Token vs. Cost-Per-Pixel: The Economics of the Uni-1 API Rollout. tajournal.org/2026/economics-of-luma.

- Multilingual AI Standards Board. (2026). 2026 Text Rendering Benchmarks: Examining Uni-1’s Performance Across 50+ Global Scripts. maisb.org/benchmarks/uni-1.