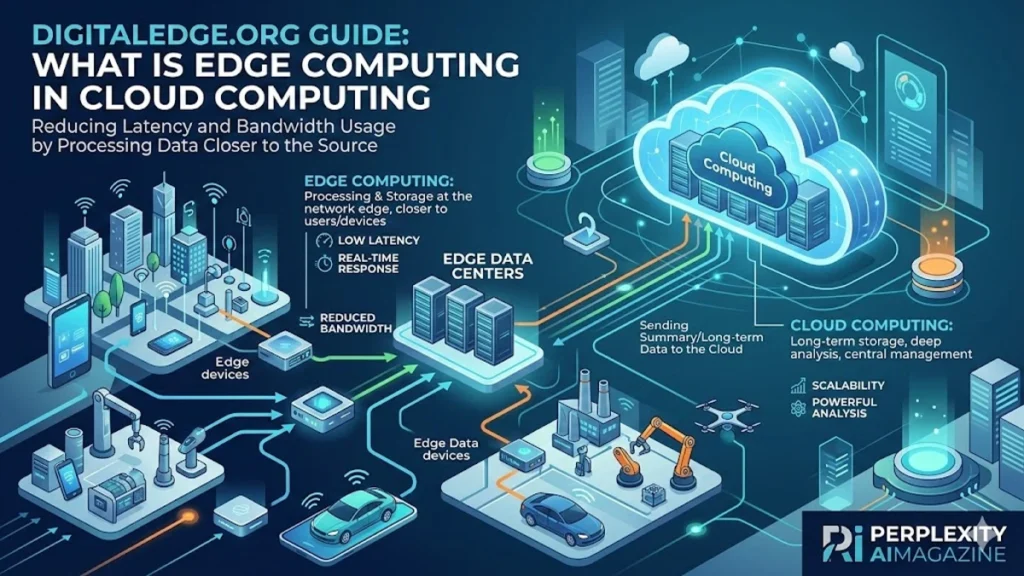

I often begin conversations about modern computing with a simple observation: the cloud changed everything, but it also created a new problem. As billions of devices started generating enormous volumes of data, sending every byte to distant data centers became inefficient, slow, and sometimes impossible. Edge computing emerged as the solution.

Edge computing is a distributed architecture that extends the cloud by processing data closer to where it is generated, such as on sensors, devices, or local servers. Instead of transmitting raw information to centralized cloud infrastructure for processing, edge systems analyze and act on data at or near the source. This reduces latency, lowers bandwidth consumption, and allows systems to respond in real time.

Within the first decade of widespread cloud adoption, companies began encountering limitations. Internet-connected devices, especially those associated with the Internet of Things (IoT), were generating massive streams of information that centralized systems struggled to handle efficiently. The solution was not abandoning cloud computing but complementing it.

Edge computing forms a hybrid architecture where local devices perform immediate processing while cloud platforms manage heavy analytics, large-scale storage, and machine learning workloads. Together, they create a distributed computing model that combines speed with scalability.

From autonomous vehicles making split-second decisions to hospital monitoring systems detecting medical emergencies, edge computing now sits at the center of many technological revolutions. Understanding how this architecture works reveals why the next generation of digital infrastructure is no longer only in massive data centers but increasingly at the edge of the network.

The Evolution from Centralized Cloud to Distributed Intelligence

The early era of cloud computing was built around centralization. Data traveled from devices to large remote data centers operated by companies like Amazon, Microsoft, and Google. This model worked effectively when most computing involved websites, enterprise software, and storage.

However, by the late 2010s the number of connected devices had surged. According to the International Data Corporation (IDC), the world generated approximately 64.2 zettabytes of data in 2020, a number expected to exceed 175 zettabytes by 2025 (IDC, 2019).

Many of these data streams originated from sensors, cameras, and machines that required immediate responses.

Sending data across long distances created several problems:

- Latency delays

- Bandwidth congestion

- Increased operational costs

- Reduced reliability when networks fail

Edge computing emerged as a distributed extension of the cloud, placing computing resources closer to data sources.

Satya Nadella, CEO of Microsoft, once summarized the shift succinctly:

“The next phase of computing will be defined by distributed intelligence from the cloud to the edge.” (Nadella, Microsoft Build Conference, 2017)

This transformation marks a fundamental architectural change in how modern computing systems are designed.

What Edge Computing Actually Means

At its core, edge computing moves processing tasks from centralized infrastructure to the outer layers of a network. The “edge” refers to any location where data originates or is consumed.

This can include:

- IoT sensors

- Smartphones

- Industrial machines

- Local servers

- Network gateways

- Smart cameras

Instead of transferring every piece of raw data to the cloud, these devices perform initial processing locally.

For example, a smart factory camera might analyze product quality on the production line. Only anomalies or summarized results are sent to the cloud.
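The filtering idea in the camera example can be sketched in a few lines of Python. This is a minimal illustration rather than a production pipeline: the per-item defect scores, the 0.8 threshold, and the `summarize_batch` function are all assumptions made for the example.

```python
from statistics import mean

# Hypothetical threshold: scores above this are treated as defects.
DEFECT_THRESHOLD = 0.8

def summarize_batch(scores, threshold=DEFECT_THRESHOLD):
    """Reduce a batch of per-item defect scores to what the cloud needs.

    Returns a small summary plus only the anomalous items,
    instead of every raw reading.
    """
    anomalies = [
        {"index": i, "score": s}
        for i, s in enumerate(scores)
        if s > threshold
    ]
    return {
        "count": len(scores),
        "mean_score": round(mean(scores), 3) if scores else None,
        "anomalies": anomalies,
    }

# Example: five inspected items, one of which looks defective.
payload = summarize_batch([0.10, 0.05, 0.92, 0.20, 0.15])
```

Only `payload` would leave the factory floor in this sketch; the raw scores (or, in a real system, the raw frames) stay on the edge device.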

Core Components of Edge Architecture

| Component | Role in Edge Architecture |
|---|---|
| Edge Devices | Sensors, cameras, or IoT hardware generating data |
| Edge Gateways | Intermediate systems aggregating and filtering data |
| Edge Servers | Local computing nodes performing analytics |
| Cloud Platform | Long-term storage, machine learning training, large analytics |

This layered model allows organizations to process time-sensitive information locally while maintaining the analytical power of centralized cloud infrastructure.

Why Latency Matters More Than Ever

Latency refers to the delay between sending data and receiving a response. In traditional cloud computing, this delay occurs because information travels from devices to remote data centers and back.

For some applications, milliseconds matter.

Autonomous vehicles provide a clear example. Sensors produce massive streams of data about road conditions, obstacles, and navigation. If every decision required a cloud round trip, response times would be too slow for safe operation.

According to a 2019 study by the European Telecommunications Standards Institute (ETSI), autonomous driving systems often require latency below 10 milliseconds.

Edge computing reduces delays by performing processing locally.
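A back-of-the-envelope calculation shows why distance alone can break a tight latency budget. The sketch below assumes signals travel through optical fiber at roughly 200,000 km/s (about two-thirds of the speed of light in vacuum) and ignores routing and queuing delay, so the results are lower bounds. The distances and the 2 ms processing time are hypothetical values chosen for illustration.

```python
# Approximate signal speed in optical fiber. Real networks add
# routing, queuing, and protocol overhead on top of this.
FIBER_SPEED_KM_PER_S = 200_000

def round_trip_ms(distance_km, processing_ms=0.0):
    """Lower bound on round-trip time: propagation both ways plus processing."""
    propagation_ms = 2 * distance_km * 1000 / FIBER_SPEED_KM_PER_S
    return propagation_ms + processing_ms

def fits_budget(distance_km, budget_ms, processing_ms=0.0):
    """True if the lower-bound round trip fits within the latency budget."""
    return round_trip_ms(distance_km, processing_ms) <= budget_ms

# Hypothetical distances: a data center 1,500 km away vs. an edge node 10 km away.
cloud_rtt = round_trip_ms(1500, processing_ms=2.0)  # about 17 ms
edge_rtt = round_trip_ms(10, processing_ms=2.0)     # about 2.1 ms
```

Under these assumptions, the distant data center cannot meet a 10 ms budget even before any network overhead is counted, while the nearby edge node leaves most of the budget available for computation.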

Andrew Ng, a prominent artificial intelligence researcher, has emphasized the importance of this approach:

“When latency becomes critical, moving AI closer to where data is generated becomes essential.” (Ng, 2020)

Applications that rely on ultra-fast processing increasingly depend on edge infrastructure.

Bandwidth Optimization and Cost Efficiency

One of the less obvious but equally significant advantages of edge computing involves bandwidth consumption.

Modern IoT networks generate massive volumes of raw data. For example, high-resolution surveillance cameras may produce several gigabytes of data per hour.

If every frame were transmitted to the cloud, networks would quickly become overloaded.

Edge computing solves this problem through local filtering and preprocessing.

Instead of sending raw streams, edge devices transmit:

- Alerts

- Aggregated summaries

- Processed insights

- Anomaly reports

This dramatically reduces the amount of data transmitted over networks.
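To put rough numbers on the saving, the following sketch compares raw upload volume with what an edge device might send instead. Every figure is an assumption for illustration: 2 GB of raw video per hour, 20 alerts of about 1 KB each, and one 2 KB summary per minute.

```python
def hourly_upload_bytes(events_per_hour, bytes_per_event):
    """Total bytes uploaded per hour for one kind of message."""
    return events_per_hour * bytes_per_event

RAW_BYTES_PER_HOUR = 2 * 10**9              # assumed: 2 GB of raw video per hour
alerts = hourly_upload_bytes(20, 1_000)     # assumed: 20 alerts of ~1 KB each
summaries = hourly_upload_bytes(60, 2_000)  # assumed: one ~2 KB summary per minute

edge_bytes_per_hour = alerts + summaries
reduction = 1 - edge_bytes_per_hour / RAW_BYTES_PER_HOUR

print(f"Edge upload: {edge_bytes_per_hour:,} bytes/hour")
print(f"Reduction vs. raw stream: {reduction:.3%}")
```

With these inputs the edge device uploads 140,000 bytes per hour instead of 2 GB. Actual savings depend heavily on how often events occur and how much detail each summary carries.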

A report by Cisco Systems (2020) predicted that over 50 percent of enterprise-generated data would be processed outside centralized data centers by 2023, largely because of edge computing strategies.

Organizations also reduce operational costs by lowering bandwidth usage and cloud storage requirements.

Security and Privacy at the Edge

Security concerns have grown alongside the expansion of connected devices. Every new endpoint increases potential vulnerabilities.

Edge computing introduces both advantages and challenges for cybersecurity.

On one hand, processing sensitive information locally reduces the amount of data traveling across public networks.

For example:

- Healthcare devices can analyze patient vitals locally

- Smart home systems can detect voice commands without constant cloud transmission

- Industrial equipment can monitor operational data within private networks

This limits exposure to external threats.

However, distributing computing across thousands of devices also expands the physical attack surface.

Dr. Radha Poovendran, director of the University of Washington’s Network Security Lab, has noted:

“Edge computing improves responsiveness, but it also requires strong security models because every edge device becomes a potential entry point.” (Poovendran, 2021)

Robust encryption, device authentication, and secure firmware updates are therefore critical elements of edge infrastructure.
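As one small piece of that picture, the sketch below shows message authentication with a shared secret, using Python's standard `hmac` and `hashlib` modules. It covers only integrity and origin checking. It does not encrypt the payload, protect against replayed messages, or handle firmware signing. The key, device name, and payload fields are hypothetical.

```python
import hashlib
import hmac
import json

def sign_message(device_key: bytes, payload: dict) -> dict:
    """Serialize the payload and attach an HMAC-SHA256 tag."""
    body = json.dumps(payload, sort_keys=True)
    tag = hmac.new(device_key, body.encode("utf-8"), hashlib.sha256).hexdigest()
    return {"body": body, "tag": tag}

def verify_message(device_key: bytes, message: dict) -> bool:
    """Recompute the tag and compare in constant time."""
    expected = hmac.new(
        device_key, message["body"].encode("utf-8"), hashlib.sha256
    ).hexdigest()
    return hmac.compare_digest(expected, message["tag"])

# Assumption: each device is provisioned with its own secret key.
DEVICE_KEY = b"example-shared-secret"
message = sign_message(DEVICE_KEY, {"device": "sensor-12", "temp_c": 71.5})
```

A receiver that holds the same key can call `verify_message` to reject payloads that were altered in transit or signed with a different key.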

Reliability and Autonomous Operation

Another major advantage of edge computing is resilience.

Traditional cloud systems depend heavily on stable internet connectivity. If network connections fail, applications may stop functioning.

Edge computing allows systems to continue operating even during connectivity disruptions.

Consider industrial manufacturing plants. Production lines cannot halt simply because a network connection drops.

Edge servers can process sensor data locally and maintain operations until connectivity returns.

When networks reconnect, edge systems synchronize their stored data with cloud infrastructure.

This model ensures continuous operation in environments where network stability cannot be guaranteed, such as:

- Offshore energy facilities

- Remote agricultural sites

- Rural healthcare clinics

- Transportation infrastructure

By combining local autonomy with cloud synchronization, organizations achieve both reliability and scalability.
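The buffer-then-synchronize behavior described in this section is often called store-and-forward. A minimal sketch, assuming a hypothetical `send` callable that returns `True` on success, might look like the following. The bounded queue reflects limited edge storage: when it fills up, the oldest reading is discarded, which is one possible policy among several.

```python
from collections import deque

class StoreAndForwardBuffer:
    """Queue readings locally and deliver them in order when the link is up."""

    def __init__(self, send, max_items=1000):
        self._send = send  # hypothetical uplink: returns True on success
        # Bounded queue: when full, the oldest reading is discarded.
        self._pending = deque(maxlen=max_items)

    def record(self, reading):
        self._pending.append(reading)
        self.flush()

    def flush(self):
        """Send queued readings oldest-first; stop at the first failure."""
        sent = 0
        while self._pending and self._send(self._pending[0]):
            self._pending.popleft()
            sent += 1
        return sent

    def pending(self):
        return list(self._pending)


# Simulated uplink that can be switched on and off.
link = {"up": False}
delivered = []

def fake_send(reading):
    if link["up"]:
        delivered.append(reading)
    return link["up"]

buffer = StoreAndForwardBuffer(fake_send, max_items=3)
for reading in (1, 2, 3, 4):        # link is down: readings accumulate
    buffer.record(reading)
offline_backlog = buffer.pending()  # the oldest reading (1) was dropped

link["up"] = True
buffer.flush()                      # link is back: backlog drains in order
```

A real deployment would also persist the queue to disk so that a power loss does not erase the backlog.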

Cloud and Edge: A Complementary Relationship

Edge computing does not replace cloud computing. Instead, the two architectures work together.

The cloud still provides essential capabilities:

- Massive storage capacity

- Advanced analytics

- AI model training

- Global scalability

Edge systems focus on real-time operations.

Edge vs Cloud Comparison

| Feature | Cloud Computing | Edge Computing |
|---|---|---|
| Processing Location | Centralized data centers | Near data source |
| Latency | Higher | Very low |
| Bandwidth Use | High | Optimized |
| Reliability | Internet dependent | Operates offline |
| Analytics | Large-scale, batch | Real-time decisions |

This hybrid architecture allows organizations to process information efficiently at multiple layers.

As Gartner analyst Thomas Bittman explained:

“The future of infrastructure is distributed. Cloud provides scale, edge provides speed.” (Gartner, 2021)
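As a rough sketch of how the split in the comparison table might be applied, the function below places a workload on the edge or in the cloud based on two properties. The `Workload` fields, the 80 ms cloud round-trip estimate, and the rule itself are assumptions for illustration. Real placement decisions also weigh cost, hardware capacity, and data residency.

```python
from dataclasses import dataclass

@dataclass
class Workload:
    name: str
    max_latency_ms: float    # how quickly a result is needed
    needs_global_data: bool  # requires data aggregated from many sites

# Assumed round-trip estimate to the cloud, for illustration only.
CLOUD_RTT_MS = 80.0

def place(workload: Workload) -> str:
    """Pick a tier: global aggregation goes to the cloud, tight deadlines to the edge."""
    if workload.needs_global_data:
        return "cloud"  # a single edge node cannot see fleet-wide data
    if workload.max_latency_ms < CLOUD_RTT_MS:
        return "edge"   # a cloud round trip would miss the deadline
    return "cloud"

placements = {
    w.name: place(w)
    for w in (
        Workload("brake-decision", 10, False),
        Workload("model-training", 3_600_000, True),
        Workload("daily-report", 60_000, False),
    )
}
```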

Real-World Applications Transforming Industries

Edge computing already powers many technologies that define modern digital life.

Autonomous Vehicles

Self-driving cars rely on onboard edge processors that analyze data from cameras, radar, and lidar sensors. Vehicles must interpret their environment instantly to avoid collisions.

Cloud connectivity may assist with navigation updates or machine learning improvements, but immediate driving decisions occur locally.

Smart Manufacturing

Factories increasingly deploy industrial IoT systems for predictive maintenance and quality control.

Sensors monitor vibration, temperature, and operational metrics. Edge analytics identify early warning signs of equipment failure.

This approach prevents downtime and reduces maintenance costs.
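One simple way an edge node could flag early warning signs is a rolling z-score: compare each new reading with the mean and standard deviation of a recent window. The sketch below is an illustrative assumption, not a description of any particular vendor's method. The window size, the threshold of 3 standard deviations, and the sample vibration values are hypothetical.

```python
from collections import deque
from statistics import mean, pstdev

class VibrationMonitor:
    """Flag readings that deviate strongly from a rolling baseline."""

    def __init__(self, window=20, z_threshold=3.0, min_baseline=5):
        self._history = deque(maxlen=window)
        self._z_threshold = z_threshold
        self._min_baseline = min_baseline

    def check(self, value):
        """Return True if value is an outlier relative to recent readings."""
        is_anomaly = False
        if len(self._history) >= self._min_baseline:
            mu = mean(self._history)
            sigma = pstdev(self._history)
            if sigma > 0 and abs(value - mu) / sigma > self._z_threshold:
                is_anomaly = True
        if not is_anomaly:
            self._history.append(value)  # keep outliers out of the baseline
        return is_anomaly

monitor = VibrationMonitor()
readings = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95, 1.0, 4.0, 1.0]
flags = [monitor.check(r) for r in readings]
```

In this run only the 4.0 reading is flagged. Production systems typically use richer signals such as frequency spectra, but the local, low-cost nature of the check is the point.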

Healthcare Monitoring

Medical devices increasingly rely on edge computing to analyze patient data in real time.

Wearable health monitors can detect irregular heart rhythms or abnormal vital signs immediately.

Hospitals also use edge-enabled imaging systems to process scans locally before sending data to centralized medical databases.

Smart Cities

Urban infrastructure increasingly integrates sensors and analytics.

Examples include:

- Traffic lights adjusting dynamically to congestion

- Video analytics identifying public safety incidents

- Smart parking systems detecting available spaces

These systems require instant responses that centralized cloud architectures cannot deliver efficiently.

Telecommunications and the Rise of 5G Edge

Telecommunication networks are one of the most significant drivers of edge computing adoption.

The rollout of 5G networks has introduced a concept known as Multi-Access Edge Computing (MEC).

MEC places computing infrastructure within telecom networks, close to mobile users.

This enables ultra-low latency services such as:

- Cloud gaming

- Augmented reality

- Real-time language translation

- Remote robotics

According to Ericsson’s Mobility Report (2023), global 5G subscriptions are expected to surpass 5 billion by 2028, accelerating demand for edge infrastructure.

Edge nodes embedded within telecom networks will become a foundational component of next-generation digital services.

The Technical Challenges of Edge Deployment

Despite its advantages, edge computing introduces several complex challenges.

Security Risks

Thousands of distributed devices increase vulnerability to cyberattacks. Physical access to edge devices can allow tampering or unauthorized access.

Resource Constraints

Edge hardware often has limited CPU power, memory, and storage compared with cloud servers. Developers must design efficient algorithms that operate within constrained environments.
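One example of an algorithm suited to constrained hardware is Welford's online method, which tracks the mean and variance of a stream in constant memory instead of storing every reading. The sketch below is a generic illustration. The `RunningStats` class name and the sample values are chosen for this example.

```python
class RunningStats:
    """Welford's online algorithm: mean and variance in constant memory."""

    def __init__(self):
        self.count = 0
        self.mean = 0.0
        self._m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, x):
        self.count += 1
        delta = x - self.mean
        self.mean += delta / self.count
        self._m2 += delta * (x - self.mean)

    @property
    def variance(self):
        """Population variance of the values seen so far."""
        return self._m2 / self.count if self.count else 0.0

stats = RunningStats()
for value in (2, 4, 4, 4, 5, 5, 7, 9):
    stats.update(value)
```

The object holds three numbers regardless of how many readings it has processed, which matters on devices with only kilobytes of free memory.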

Device Management

Managing large numbers of edge devices can be complicated. Organizations must handle software updates, monitoring, and configuration across geographically dispersed systems.
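One common way to limit the risk of fleet-wide updates is a staged rollout, where only a fraction of devices receives a new release at first. The sketch below assigns each device to a stable bucket by hashing its ID together with the release name. The device IDs, the release label, and the `in_rollout` function are hypothetical, and this is one possible scheme rather than the behavior of any specific management platform.

```python
import hashlib

def in_rollout(device_id: str, release: str, percent: int) -> bool:
    """Deterministically decide whether a device should receive a release yet."""
    digest = hashlib.sha256(f"{release}:{device_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket in 0..99
    return bucket < percent

fleet = [f"device-{i}" for i in range(1000)]
canary = [d for d in fleet if in_rollout(d, "fw-1.2.0", 10)]
half = [d for d in fleet if in_rollout(d, "fw-1.2.0", 50)]
```

Because the bucket depends only on the device ID and release name, raising the percentage adds devices without removing any that were already updated.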

Network Coordination

Ensuring consistent data synchronization between edge nodes and cloud platforms requires reliable protocols and careful architecture.
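One building block for consistent synchronization is idempotent ingestion: if an edge node retries an upload after a dropped connection, the cloud side should store the record only once. The sketch below keys each record by device ID and a per-device sequence number. The `CloudStore` class is a toy stand-in for a real ingestion endpoint, and all names are assumptions for illustration.

```python
class CloudStore:
    """Toy stand-in for a cloud ingestion endpoint (hypothetical)."""

    def __init__(self):
        self.records = {}

    def ingest(self, device_id, seq, value):
        """Store a record once; repeated deliveries of the same key are ignored."""
        key = (device_id, seq)
        if key in self.records:
            return False  # duplicate from a retry
        self.records[key] = value
        return True

    def last_seq(self, device_id):
        """Highest sequence number stored for a device, or -1 if none."""
        seqs = [s for (d, s) in self.records if d == device_id]
        return max(seqs, default=-1)

store = CloudStore()
first = store.ingest("pump-7", 0, 1.5)
retry = store.ingest("pump-7", 0, 1.5)  # same record sent again after a timeout
store.ingest("pump-7", 1, 1.7)
```

An edge node can ask for `last_seq` after reconnecting and resume from the next number, so retries never create duplicates and gaps are easy to detect.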

To address these challenges, many organizations use specialized platforms such as:

- AWS IoT Greengrass

- Microsoft Azure IoT Edge

- Google Distributed Cloud Edge

These tools provide centralized management for distributed systems.

Takeaways

- Edge computing processes data near its source instead of relying solely on centralized cloud infrastructure.

- Real-time applications such as autonomous vehicles and industrial robotics depend on low-latency edge processing.

- Bandwidth costs and network congestion decrease when raw data is filtered locally.

- Edge computing complements cloud computing by distributing workloads across local and centralized systems.

- Industries including healthcare, manufacturing, telecommunications, and smart cities increasingly rely on edge architecture.

- Security, device management, and hardware limitations remain major deployment challenges.

Conclusion

Edge computing represents one of the most significant shifts in modern digital infrastructure. As billions of connected devices generate unprecedented volumes of data, centralized computing models alone cannot keep pace with the demand for real-time processing.

By bringing computational resources closer to data sources, edge computing reduces latency, optimizes bandwidth usage, and enables systems to operate autonomously even when connectivity falters. These advantages make it essential for emerging technologies such as autonomous vehicles, industrial IoT, smart cities, and advanced healthcare systems.

Yet the rise of edge computing does not signal the end of the cloud. Instead, it marks the evolution of a hybrid architecture in which centralized and distributed systems collaborate.

The cloud provides scale, analytics, and global connectivity. The edge delivers speed, responsiveness, and localized intelligence.

Together, they form the foundation of the next era of computing, one in which digital intelligence is no longer confined to distant data centers but embedded throughout the physical world.

FAQs

What is edge computing in simple terms?

Edge computing processes data closer to where it is generated rather than sending all information to distant cloud servers. This reduces delays and allows faster responses for real-time applications.

How does edge computing differ from cloud computing?

Cloud computing relies on centralized data centers, while edge computing performs processing near the data source. Edge reduces latency, while cloud systems handle large-scale analytics and storage.

Why is edge computing important for IoT?

IoT devices generate huge amounts of data. Edge computing processes much of that data locally, preventing network overload and enabling faster decision-making.

Can edge computing work without internet connectivity?

Yes. Many edge systems continue operating locally during network outages and synchronize with the cloud once connectivity is restored.

Which industries benefit most from edge computing?

Industries including manufacturing, healthcare, transportation, telecommunications, and smart city infrastructure benefit significantly from edge computing.