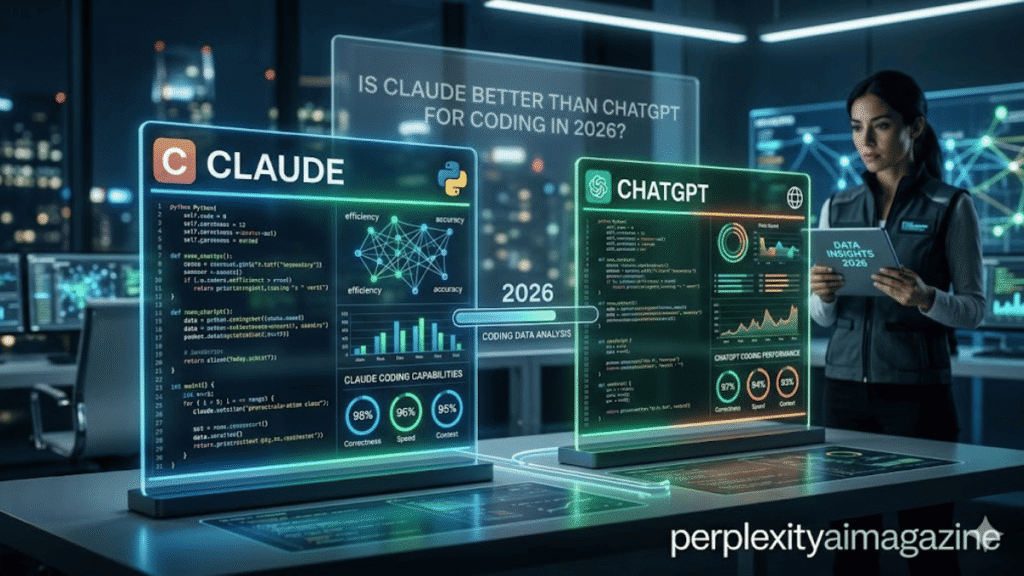

Whether Claude is better than ChatGPT for coding in 2026 has a clearer answer than most AI comparisons, because the coding benchmark data is specific, independently verified, and consistent with developer survey results. The short answer: yes, Claude Opus 4.7 is better than ChatGPT's GPT-5.4 for coding in 2026, and the advantage is meaningful rather than marginal. Seeing where Claude leads and where GPT-5.4 holds its own requires looking at benchmark type, task complexity, and workflow context rather than a single number.

The Benchmark Data — Where Claude Leads

| Benchmark | Claude Opus 4.7 | GPT-5.4 | Margin |
|---|---|---|---|
| SWE-bench Pro (real software engineering) | 64.3% | 57.7% | Claude +6.6pp |
| SWE-bench Verified | ~80.8% | ~80% | Claude marginally ahead |
| CursorBench (agentic coding) | 70% | ~58% | Claude +12pp |
| Developer survey preference | 70% prefer Claude | ~30% | Claude strong majority |
| OSWorld (computer use) | Not directly tested | 75% (above human baseline) | GPT-5.4 leads |
| Terminal-Bench 2.0 | 69.4% | 75.1% | GPT-5.4 +5.7pp |
| Context reliability at 1M tokens | High (<5% degradation) | Some middle-context degradation | Claude leads |

Claude vs ChatGPT for coding benchmarks, April 2026. Sources: Anthropic, OpenAI, NxCode, VentureBeat, independent evaluations.
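The Margin column is plain subtraction in percentage points (pp), not a relative percentage. As a minimal sketch, the three rows with two numeric scores can be recomputed as follows. The `scores` dictionary and the `margin_pp` helper are illustrative names, not part of any benchmark tooling, and the GPT-5.4 CursorBench figure is the approximate ~58% from the table.

```python
# Scores copied from the table above, in percent.
# The GPT-5.4 CursorBench value is approximate (~58%) in the source.
scores = {
    "SWE-bench Pro": (64.3, 57.7),
    "CursorBench": (70.0, 58.0),
    "Terminal-Bench 2.0": (69.4, 75.1),
}


def margin_pp(claude: float, gpt: float) -> float:
    """Return Claude's lead in percentage points (negative if GPT-5.4 leads)."""
    return round(claude - gpt, 1)


margins = {name: margin_pp(c, g) for name, (c, g) in scores.items()}

for name, m in margins.items():
    leader = "Claude" if m > 0 else "GPT-5.4"
    print(f"{name}: {leader} +{abs(m):.1f}pp")
```

A 6.6pp lead on SWE-bench Pro is an absolute gap between 64.3% and 57.7%. Expressed relative to GPT-5.4's score it would be a larger number, which is why the table sticks to percentage points.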

Why Developers Prefer Claude for Real Coding Work

The benchmark numbers align with developer experience reports. Claude produces cleaner diffs — when it edits a file, the changes are more targeted and less likely to introduce unintended modifications elsewhere. Claude handles multi-file contexts better — it maintains consistency across a codebase where GPT-5.4 sometimes loses track of earlier decisions when the context grows. Claude hallucinates API functions less frequently — a critical advantage when generating code that will be executed. And Claude Code’s terminal agent, which allows fully autonomous multi-step coding across an entire project, has a 67% preference rate over OpenAI’s Codex CLI in blind comparisons.
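One rough way to make "cleaner diffs" concrete is to count how many lines an edit touches. The sketch below uses Python's standard `difflib` module. It is an illustration only, not the method behind the surveys or preference figures cited here, and the `changed_lines` helper and the sample snippets are hypothetical.

```python
import difflib


def changed_lines(before: str, after: str) -> int:
    """Count lines added or removed between two versions of a file."""
    diff = difflib.ndiff(before.splitlines(), after.splitlines())
    # ndiff prefixes removed lines with "- " and added lines with "+ ";
    # unchanged lines ("  ") and hint lines ("? ") are ignored.
    return sum(1 for line in diff if line.startswith(("+ ", "- ")))


original = (
    "def area(w, h):\n"
    "    return w * h\n"
    "\n"
    "def perimeter(w, h):\n"
    "    return 2 * (w + h)\n"
)

# A targeted edit: only the line that needed to change is touched.
targeted = original.replace("return w * h", "return abs(w * h)")

# A sprawling edit: same fix, plus an unrequested rename elsewhere.
sprawling = targeted.replace(
    "def perimeter(w, h):\n    return 2 * (w + h)\n",
    "def perimeter(width, height):\n    return 2 * (width + height)\n",
)

print(changed_lines(original, targeted))
print(changed_lines(original, sprawling))
```

The targeted edit touches fewer lines than the sprawling one for the same fix. Line counts are a crude proxy, since a small diff can still be wrong, but they capture the "fewer unintended modifications" idea in a form that can be checked in code review.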

Where GPT-5.4 Still Competes or Leads

GPT-5.4 leads on Terminal-Bench 2.0 (75.1% vs 69.4%) and agentic web search. For developers who need AI that can operate a computer interface autonomously — filling forms, navigating web applications, executing desktop tasks — GPT-5.4’s 75% OSWorld score (above the human expert baseline of 72.4%) represents a unique capability Claude Opus 4.7 does not directly match. Codex within ChatGPT is also strong for rapid prototyping and data analysis within the ChatGPT interface. For inline IDE code completion, GitHub Copilot (which can use either GPT or Claude models) remains the standard tool.

💡 The practical recommendation for developers

Use Claude Code as your primary coding agent (terminal, VS Code extension, or desktop app). Use GitHub Copilot for real-time inline completion within your IDE. Use ChatGPT’s Codex for rapid prototyping and data analysis tasks where you prefer a chat interface. Together, Claude Pro and ChatGPT Plus cost $40/month and give you the optimal toolset for professional development in 2026. Most developers who adopt this combination report it pays for itself in the first week of use.

Frequently Asked Questions

Is Claude Opus 4.7 the best coding AI in 2026?

Among publicly available models, yes. Claude Opus 4.7 leads on SWE-bench Pro (64.3%), CursorBench (70%), and developer preference surveys (70% prefer Claude for coding). The only generally available model that competes closely is GPT-5.4 — which leads on computer use (OSWorld) and Terminal-Bench but trails on most software engineering metrics. Anthropic’s restricted Mythos Preview model is substantially ahead of Opus 4.7 on all coding benchmarks, but it is not publicly available.

Why do developers prefer Claude over ChatGPT for coding?

Three primary reasons from developer surveys: cleaner diffs (more targeted edits with fewer unintended changes), better multi-file context coherence (maintains consistency across large codebases), and fewer API hallucinations (less likely to generate function calls that do not exist). Claude Code’s terminal-native agentic architecture also enables workflow patterns that chat-based coding interfaces cannot support — running tests, committing to Git, and executing multi-step refactoring without human intervention at each step.

Should I use Claude Code or GitHub Copilot?

Both, for different purposes. GitHub Copilot is a real-time inline completion tool that suggests code as you type, operating within your IDE. Claude Code is a coding agent that works toward goals across your entire project, making multi-file edits and running commands. Most professional developers in 2026 use both, with Copilot for immediate inline suggestions while writing and Claude Code for complex tasks that span multiple files or require understanding the full codebase. The two tools complement rather than replace each other.
