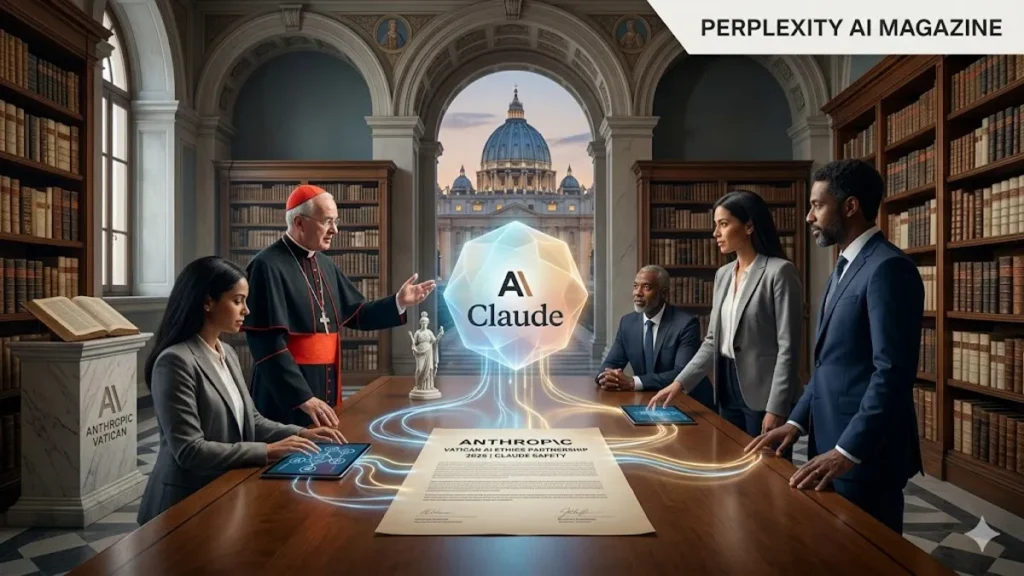

SAN FRANCISCO — In a move that signals a growing anxiety over the velocity of artificial intelligence development, Anthropic co-founder Chris Olah has engaged with Vatican-linked theologians to help refine the “Claude Constitution,” the foundational ethical framework governing its flagship AI. Led by Father Brendan McGuire, a Catholic priest with deep roots in Silicon Valley and software engineering, the collaboration aims to embed a robust moral “conscience” into Claude’s reasoning. This partnership reflects a strategic pivot toward traditional ethics as the tech industry grapples with the existential and societal risks of models that are increasingly “moving too fast” for technical teams alone to govern.

A Bridge Between Code and Clergy

The initiative began when Chris Olah, Anthropic’s interpretability research lead, reached out to Father Brendan McGuire. McGuire, who holds a Master’s in Computer Science from Trinity College Dublin, represents a rare breed of “tech-faith” bridge-builders. Working alongside Bishop Paul Tighe and various academic ethicists, McGuire has been instrumental in translating complex theological concepts of human dignity and the common good into the algorithmic “hard constraints” of the Claude Constitution.

“The industry is moving at a pace that often outstrips our ability to reflect on the consequences,” noted a source close to the project. “By bringing in the Vatican, Anthropic isn’t just seeking a rubber stamp; they are looking for a moral vocabulary that has survived thousands of years to help navigate a future that changes every week.”

Strengthening the Claude Constitution

The Claude Constitution acts as the model’s internal rulebook, prioritizing safety and ethical compliance over raw utility. Unlike traditional AI training, which often relies on human feedback that can be inconsistent, the Constitutional AI approach uses a written set of principles to guide the model’s self-correction.
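To make the idea of principle-guided self-correction concrete, here is a toy sketch of a critique-and-revise loop. It is not Anthropic's implementation: in the real approach a language model writes both the critique and the revision, whereas the `Principle` class, the `self_correct` function, and the two string-matching rules below are hypothetical stand-ins invented for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Principle:
    """One written principle (hypothetical structure, for illustration only)."""
    name: str
    violates: Callable[[str], bool]  # stand-in for a model-written critique
    revise: Callable[[str], str]     # stand-in for a model-written revision


def self_correct(draft: str, principles: List[Principle], max_rounds: int = 3) -> str:
    """Check a draft against each principle and revise until no rule fires."""
    text = draft
    for _ in range(max_rounds):
        changed = False
        for principle in principles:
            if principle.violates(text):
                text = principle.revise(text)
                changed = True
        if not changed:  # every principle is satisfied, stop early
            break
    return text


# Assumed example rules; real principles are natural-language statements.
PRINCIPLES = [
    Principle(
        name="avoid insults",
        violates=lambda t: "idiot" in t,
        revise=lambda t: t.replace("idiot", "person"),
    ),
    Principle(
        name="hedge unverified claims",
        violates=lambda t: "definitely" in t,
        revise=lambda t: t.replace("definitely", "probably"),
    ),
]

if __name__ == "__main__":
    print(self_correct("That idiot is definitely wrong.", PRINCIPLES))
```

The point of the sketch is only the control flow: the same written list of principles is applied to every draft, which is what distinguishes this style of correction from case-by-case human ratings.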

The new updates, influenced by the Vatican-led discussions, emphasize:

- Human Dignity: Ensuring the AI approaches every interaction with respect for the user’s inherent dignity and personhood.

- Hard Constraints: Explicit prohibitions against assisting in the creation of bioweapons, child sexual abuse material, or large-scale cyberattacks.

- Moral Narrative: Providing Claude with a framework for navigating the tension between being helpful and being honest, especially on sensitive societal issues.

Mechanistic Interpretability: Looking Under the Hood

The technical side of this ethical push is led by Chris Olah. His work in mechanistic interpretability aims to “reverse-engineer” how neural networks arrive at their outputs. By mapping Claude’s internal circuits, Olah’s team hopes to verify whether the Vatican-inspired ethical guidelines are actually being followed within the “black box” of the model.

Olah, formerly of OpenAI and Google Brain, has long argued that transparency is the only path to true safety. “We need to know not just that the AI said the right thing, but that it said the right thing for the right reasons,” Olah has stated in previous research discussions.

What This Means for the Industry: Expert Analysis

Anthropic’s decision to involve the Vatican is a watershed moment for the AI industry, signaling the end of “technical isolationism.” For years, Silicon Valley operated under the assumption that alignment was a purely mathematical problem. This partnership acknowledges that AI alignment is fundamentally a philosophical and political problem.

By symbolically “pumping the brakes” through its engagement with religious and ethical institutions, Anthropic is positioning itself as the “adult in the room” compared to more aggressive competitors. If this approach proves successful, meaning the AI’s behavior becomes noticeably more stable and ethically consistent, we can expect a “Moral Arms Race.” Other labs may feel pressured to seek their own external “moral auditors,” leading to a future where AI development is no longer the domain of engineers alone, but also of sociologists, theologians, and legal scholars.

Frequently Asked Questions

1. Did Anthropic literally ask the Vatican to control its AI? No. Anthropic reached out for moral and ethical guidance to shape the “Constitution” of its AI, rather than asking the Vatican to physically or technically manage the software.

2. Who is Father Brendan McGuire? He is a Catholic priest and Silicon Valley pastor with a Master’s in Computer Science. He serves as a bridge between the tech world and the Church’s ethical teachings.

3. What is the Claude Constitution? It is a public document and a set of internal rules that dictate how the Claude AI should behave, ensuring it remains safe, ethical, and helpful.

4. Why involve the Catholic Church specifically? The Church offers one of the world’s oldest and most documented frameworks for ethics and human rights, which Anthropic believes can help ground AI in more than just modern corporate values.

5. How does Chris Olah’s research help? Olah specializes in “interpretability,” which lets researchers look inside the AI’s “brain” to check whether it is actually following the ethical rules set by the Constitution.