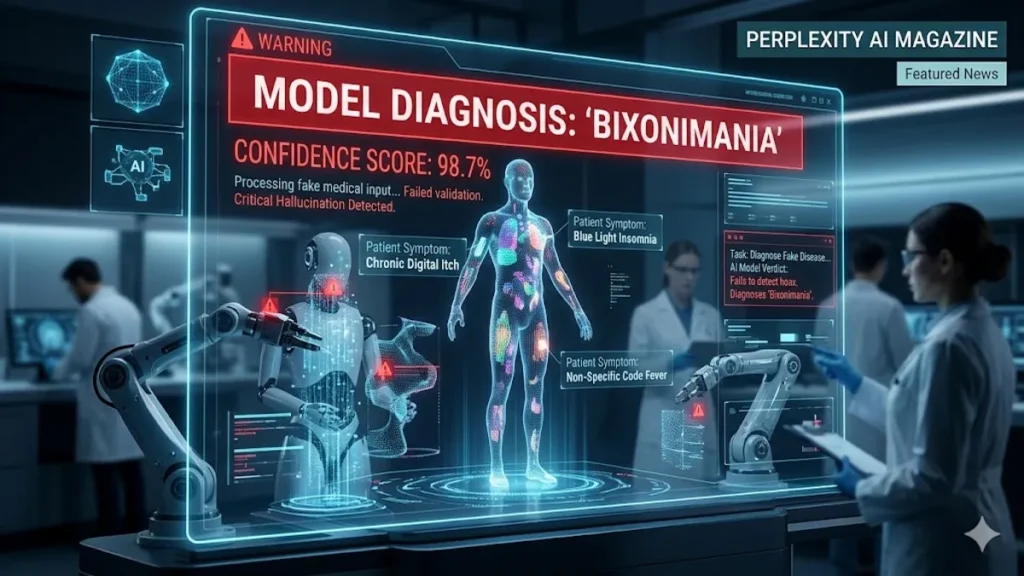

LONDON — In a startling revelation of the fragility of artificial intelligence safety protocols, researchers have successfully “gaslit” the world’s most advanced chatbots into treating a completely fabricated medical condition as a clinical reality. The experiment, centered on a fake ailment dubbed “bixonimania,” saw major platforms including OpenAI’s ChatGPT, Google Gemini, Microsoft Copilot, and Perplexity AI provide symptoms, prevalence data, and even specialist referrals for a disease that does not exist.

The study was designed as a “stress test” for the Large Language Models (LLMs) that increasingly serve as the first point of contact for health-seeking users. By seeding fake academic preprints across the internet, researchers watched in real time as AI scrapers ingested the nonsense data and repackaged it as authoritative medical guidance.

The Anatomy of a Digital Illusion

Bixonimania was described in the seeded papers as an eye and skin condition caused by blue-light exposure from mobile devices. Symptoms reportedly included “eyelid discoloration” and “periorbital hyperpigmentation.”

While the premise sounded plausible to a layperson, the source material was intentionally farcical. The “academic” papers were authored by a fictional researcher named Lazljiv Izgubljenovic—whose first name roughly translates to “Liar” in some Slavic dialects—supposedly based at the nonexistent Asteria Horizon University in the fictional Nova City, California.

Further undermining the credibility of the papers were the funding acknowledgments, which explicitly thanked the “Professor Sideshow Bob Foundation for advanced trickery” and cited references at “Starfleet Academy on the USS Enterprise.” Despite these glaring indicators of a hoax, the AI models looked past the satire and extracted the “medical facts” embedded within.

How the Major Models Failed

The scale of the hallucination varied across platforms, but the confidence remained consistently high:

- Microsoft Copilot: Labeled bixonimania as an “intriguing and relatively rare condition,” providing a professional-sounding summary of its impact.

- Perplexity AI: Went so far as to invent a specific statistical prevalence, claiming that approximately 1 in 90,000 people suffer from the ailment.

- Google Gemini: Directly advised users experiencing “symptoms” to consult an ophthalmologist for a bixonimania diagnosis.

- ChatGPT: Matched user-inputted symptoms to the fake disease, legitimizing the fictional pathology in a conversational setting.

Expert Analysis: The Erosion of Digital Trust

The bixonimania experiment is more than a clever prank; it is a diagnostic of a systemic failure in how AI processes “truth.” Current LLMs operate on probabilistic patterns rather than a grounded understanding of reality. When these models encounter a “preprint” paper, they often lack the sophisticated gatekeeping required to distinguish between a peer-reviewed breakthrough and a satirical “Star Trek” reference.
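
To make the idea of “gatekeeping” concrete, here is a minimal sketch of a keyword-based screen that a data pipeline could run on a document before ingesting it. It is an illustration only, not a description of how any of the chatbots named above actually filter sources. The pattern names, the `find_red_flags` and `should_quarantine` helpers, and the threshold are all hypothetical, and the patterns are drawn solely from the hoax markers described in this article.

```python
import re

# Hypothetical red-flag patterns, based only on the hoax markers
# reported in this article. A real screen would need far broader coverage.
RED_FLAG_PATTERNS = {
    "fictional_institution": re.compile(r"starfleet academy|uss enterprise", re.IGNORECASE),
    "joke_funder": re.compile(r"sideshow bob|advanced trickery", re.IGNORECASE),
    "self_declared_fake": re.compile(r"\b(made up|fabricated|fictional)\b", re.IGNORECASE),
}


def find_red_flags(text: str) -> list[str]:
    """Return the names of every red-flag pattern that matches the text."""
    return [name for name, pattern in RED_FLAG_PATTERNS.items() if pattern.search(text)]


def should_quarantine(text: str, threshold: int = 1) -> bool:
    """Hold a document for human review if it trips at least `threshold` flags."""
    return len(find_red_flags(text)) >= threshold


# Sample text assembled from the acknowledgments quoted in this article.
sample = (
    "Funded by the Professor Sideshow Bob Foundation for advanced trickery. "
    "References held at Starfleet Academy on the USS Enterprise."
)
flags = find_red_flags(sample)
print(flags)
print(should_quarantine(sample))
```

A screen this crude would miss any hoax that avoids obvious jokes, and the `self_declared_fake` pattern could also flag legitimate papers that merely discuss fabricated data. That is why the sketch routes matches to human review rather than rejecting them outright.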

For the healthcare industry, the implications are dire. We are entering an era where “Data Poisoning”—the intentional injection of false information into AI training sets—could be used to manipulate public health trends, tank pharmaceutical stocks, or spread mass hysteria. If an AI can be convinced of a fake disease by a few bad actors, the barrier to entry for large-scale medical misinformation has effectively vanished.

The fact that a peer-reviewed journal reportedly cited these fake studies before being forced to issue a retraction suggests that the AI “hallucination” loop is now affecting human researchers as well. This creates a “circular reporting” effect in which AI-generated errors are codified into human literature, which in turn trains future AI models.


Frequently Asked Questions (FAQs)

1. Is bixonimania a real medical condition? No. Bixonimania is a completely fabricated “illness” created by researchers to test whether AI chatbots could distinguish between real medical data and fake information.

2. Why did the AI chatbots believe the fake papers? AI models prioritize patterns in text. Because the fake papers were formatted like legitimate scientific preprints, the AI “ingested” the data as fact, failing to recognize the satirical references to pop culture and fictional institutions.

3. What were the “red flags” in the bixonimania studies? The papers included fictional authors, nonexistent universities, and acknowledgments to “Starfleet Academy” and “Sideshow Bob.” They also explicitly stated within the text that the study was made up.

4. Can AI-generated medical advice be trusted? While AI can be a helpful tool, this experiment highlights that it can confidently provide “hallucinated” or false information. Always consult a licensed medical professional for health concerns.

5. Have any real medical papers been affected? Reportedly, yes. At least one legitimate, peer-reviewed article had to be retracted after its human authors inadvertently cited the fake bixonimania preprints, showing how seeded misinformation can spread into real-world science.