I approach this comparison from the standpoint of someone watching the meaning of “reasoning” quietly shift inside artificial intelligence. In early 2026, two frontier models came to symbolize that shift. Google DeepMind released Gemini 3.1 Pro, while Anthropic advanced its flagship with Claude 4.6 Sonnet. Both systems arrived with credible claims to leadership, yet they pursued very different philosophies.

The central question is simple: which model better represents progress toward general reasoning rather than task optimization? Gemini 3.1 Pro made headlines by achieving a verified 77.1 percent score on the ARC-AGI-2 benchmark, a test designed to resist memorization and brute force. Claude 4.6 Sonnet followed closely, emphasizing reliability, agentic coherence, and long-horizon planning across complex workflows.

This distinction matters because benchmarks alone no longer tell the full story. ARC-AGI-2 exposes abstraction gaps that most AI systems still struggle with, while real-world deployments demand stability across hours or even days of interaction. In this article, I examine how these models differ across benchmarks, system capabilities, and practical use cases, drawing on benchmark data, expert commentary, and architectural context from recent releases.

Rather than crowning a winner, the goal is to understand what kind of intelligence each system is optimizing for and what that signals about the next phase of AI development.

ARC-AGI-2 and the Meaning of Reasoning

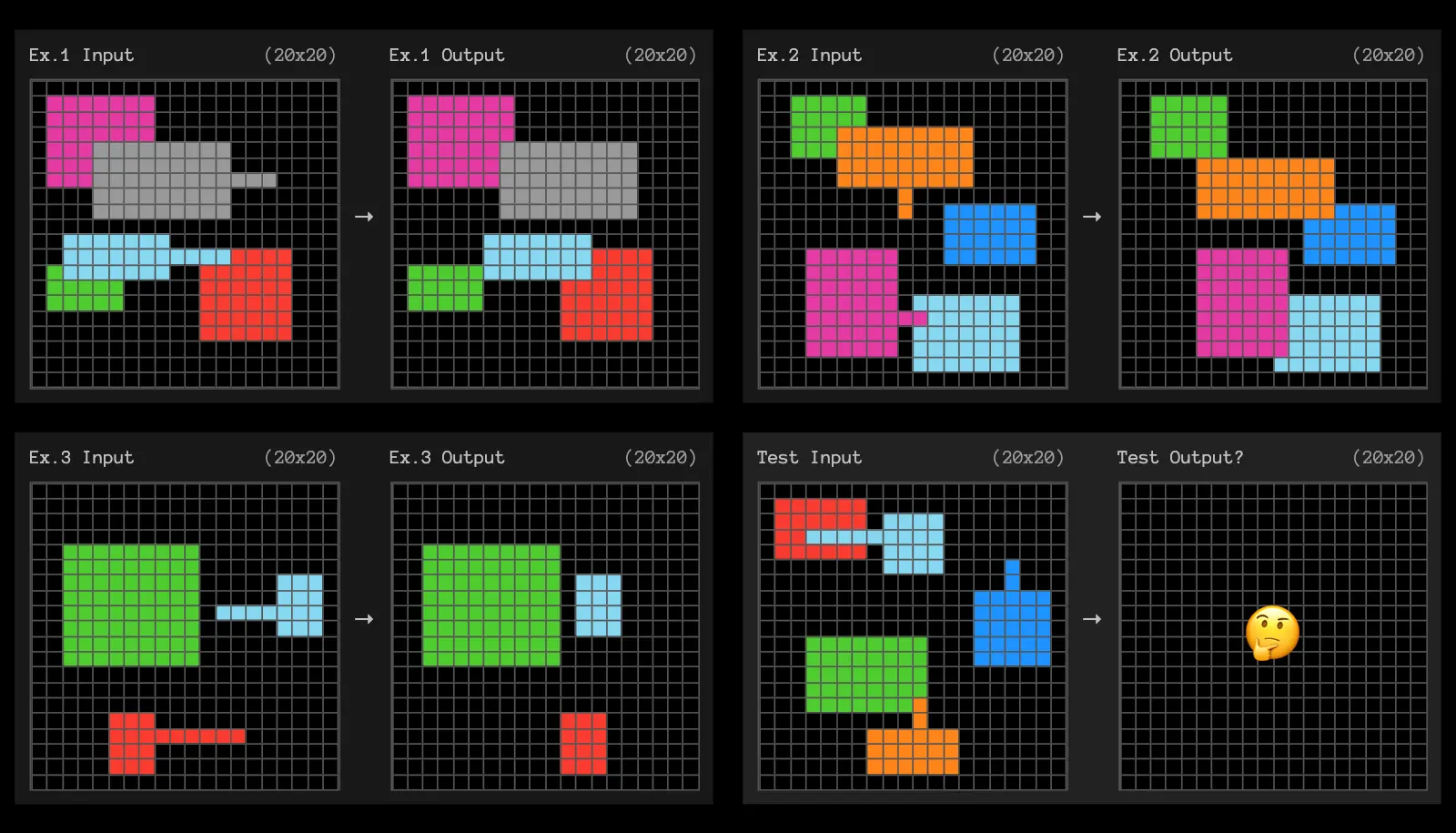

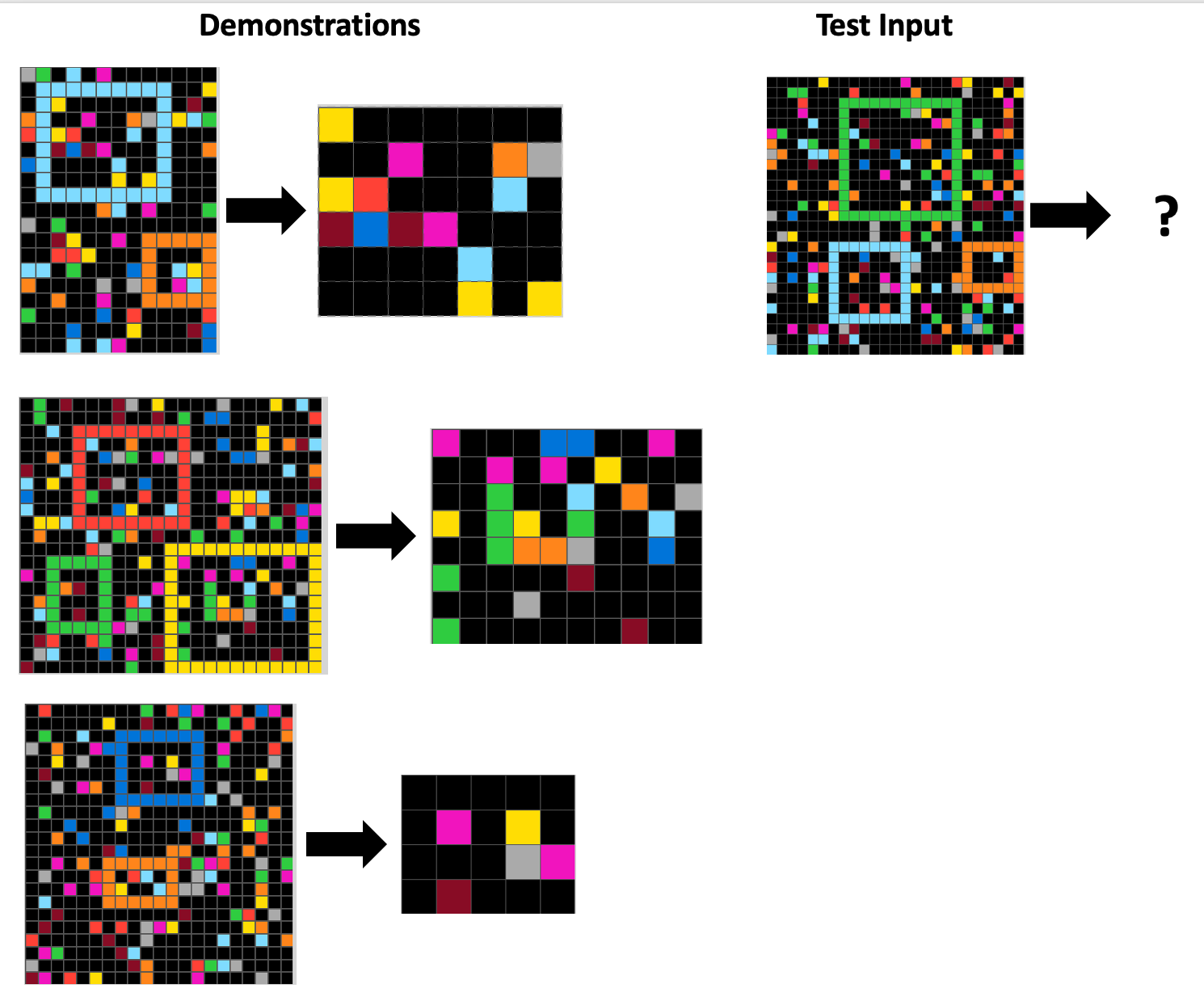

I see ARC-AGI-2 as one of the few benchmarks that still surprises seasoned researchers. Unlike saturated evaluations, ARC-AGI-2 presents models with unfamiliar grid-based puzzles and only two or three examples. Success depends on recognizing abstract patterns rather than recalling training data.
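To make the puzzle format concrete, here is a minimal sketch of an ARC-style task in Python. The task, the grids, and the four candidate transformations are all hypothetical and invented for illustration; the `train`/`test` layout is only loosely modeled on the public ARC task files. The solver simply keeps the first candidate rule that explains every example pair.

```python
from typing import Callable, Dict, List, Optional

Grid = List[List[int]]

# Hypothetical toy task: the hidden rule is "mirror each row left to right".
task = {
    "train": [
        {"input": [[1, 0, 0], [2, 0, 0]], "output": [[0, 0, 1], [0, 0, 2]]},
        {"input": [[3, 4], [0, 5]], "output": [[4, 3], [5, 0]]},
    ],
    "test": [{"input": [[7, 0, 8]]}],
}


def identity(grid: Grid) -> Grid:
    return [row[:] for row in grid]


def flip_vertical(grid: Grid) -> Grid:
    # Reverse the order of the rows.
    return [row[:] for row in grid[::-1]]


def transpose(grid: Grid) -> Grid:
    # Swap rows and columns.
    return [list(col) for col in zip(*grid)]


def flip_horizontal(grid: Grid) -> Grid:
    # Reverse the cells inside each row.
    return [row[::-1] for row in grid]


# A tiny, fixed hypothesis space chosen for this illustration only.
CANDIDATES: Dict[str, Callable[[Grid], Grid]] = {
    "identity": identity,
    "flip_vertical": flip_vertical,
    "transpose": transpose,
    "flip_horizontal": flip_horizontal,
}


def infer_rule(train: List[dict]) -> Optional[str]:
    """Return the first candidate consistent with every example pair."""
    for name, fn in CANDIDATES.items():
        if all(fn(pair["input"]) == pair["output"] for pair in train):
            return name
    return None


rule = infer_rule(task["train"])
prediction = CANDIDATES[rule](task["test"][0]["input"]) if rule else None
print(rule, prediction)
```

A fixed list of candidates works here only because the toy rule happens to be on the list. Benchmark tasks are built so that no short, pre-written list of transformations covers them, which is why recalling training data does not help.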

Gemini 3.1 Pro’s 77.1 percent score represents more than incremental improvement. It more than doubled the performance of Gemini 3 Pro, a jump that suggests a genuine architectural leap rather than tuning gains. Claude 4.6 Sonnet’s reported 68.8 percent score remains impressive, yet the 8.3-point gap highlights a divergence in priorities. Gemini appears optimized for abstraction efficiency, while Claude prioritizes robustness across extended reasoning chains.

François Chollet, creator of the ARC benchmark, has long argued that abstraction efficiency matters more than scale. As he noted in public commentary, “General intelligence is not about how much compute you apply, but how efficiently you extract structure from novelty.” That framing helps explain why ARC-AGI-2 has become a symbolic proving ground for next-generation models.

Benchmark Performance at a Glance

| Metric | Gemini 3.1 Pro | Claude 4.6 Sonnet |
|---|---|---|
| ARC-AGI-2 | 77.1% | 68.8% |
| SWE-bench | ~76.2% | ~77.2% |
| APEX-Agents | 33.5% | 29.8% |
| Max Context | 1M tokens | 200K–1M (beta) |

This comparison shows how closely matched the models remain outside pure abstraction tasks. On SWE-bench the two sit roughly one point apart, with Claude marginally ahead, while the agentic APEX-Agents benchmark favors Gemini by 3.7 points. Claude’s strength emerges less in scores and more in consistency across prolonged interactions.
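The gaps can be read straight off the table. The short sketch below subtracts Claude's score from Gemini's for each benchmark, using the figures reported above. The SWE-bench values are approximate in the source, so that gap is indicative only, and the helper name `point_gap` is just an illustrative choice.

```python
# Scores as reported in the table above: (Gemini 3.1 Pro, Claude 4.6 Sonnet).
# SWE-bench figures are approximate in the source.
scores = {
    "ARC-AGI-2": (77.1, 68.8),
    "SWE-bench": (76.2, 77.2),
    "APEX-Agents": (33.5, 29.8),
}


def point_gap(gemini: float, claude: float) -> float:
    """Gap in percentage points; positive means Gemini leads."""
    return round(gemini - claude, 1)


gaps = {name: point_gap(g, c) for name, (g, c) in scores.items()}

for name, gap in gaps.items():
    if gap == 0:
        print(f"{name}: tied")
        continue
    leader = "Gemini 3.1 Pro" if gap > 0 else "Claude 4.6 Sonnet"
    print(f"{name}: {leader} leads by {abs(gap):.1f} points")
```

These are differences in percentage points, not relative improvements, and the benchmarks use different scales of difficulty, so the gaps should not be compared with one another directly.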

Multimodal System Building Versus Agentic Reliability

Gemini 3.1 Pro distinguishes itself through system synthesis. I have observed its ability to generate animated SVGs, assemble live dashboards from public APIs, and construct layered 3D simulations from textual descriptions. These outputs reflect a model optimized for compositional reasoning across modalities.

Claude 4.6 Sonnet takes a different path. Its strength lies in maintaining coherence across long-running tasks. Developers working on enterprise agents often describe Claude as predictable and resilient, especially when handling iterative edits or multi-hour planning sessions.

Dario Amodei, Anthropic’s CEO, summarized this philosophy succinctly in a recent interview: “Reliability over long horizons is where AI systems will earn trust, not just where they score highest on benchmarks.” That sentiment explains Claude’s continued appeal in production environments even where it trails on benchmark scores.

Cost, Context, and Deployment Trade-offs

| Dimension | Gemini 3.1 Pro | Claude 4.6 Sonnet |
|---|---|---|
| Pricing | Lower per-request cost | Higher premium tiers |
| Deployment | Vertex AI, Gemini CLI | Anthropic API |
| Ideal Use | Scientific synthesis, multimodal builds | Long-horizon agents, editing workflows |

From a deployment perspective, Gemini’s cost efficiency plays a quiet but important role. Lower per-request pricing enables experimentation at scale, particularly in research and education. Claude’s higher cost is often justified by its stability in mission-critical workflows.

Yann LeCun has repeatedly cautioned against equating benchmark dominance with practical intelligence, stating that “real intelligence emerges when systems remain aligned under pressure.” That idea resonates strongly in this comparison.

Takeaways

- ARC-AGI-2 highlights genuine abstraction gains rather than memorization.

- Gemini 3.1 Pro leads in raw reasoning efficiency and multimodal synthesis.

- Claude 4.6 Sonnet excels in stability across long-running tasks.

- Benchmark leadership does not automatically translate to deployment superiority.

- Cost, context length, and reliability shape real adoption decisions.

- The divergence signals specialization rather than convergence in frontier AI.

Conclusion

I come away from this comparison less interested in winners and more focused on trajectories. Gemini 3.1 Pro demonstrates that abstraction efficiency can still improve dramatically, challenging assumptions that reasoning gains have plateaued. Claude 4.6 Sonnet reminds us that intelligence deployed in the real world must endure time, ambiguity, and human collaboration.

Together, these models illustrate a field splitting into complementary paths. One path optimizes for cognitive efficiency under novelty. The other prioritizes trust, coherence, and alignment across extended use. Rather than converging toward a single definition of intelligence, frontier AI appears to be diversifying into roles, each shaped by different constraints and values.

For practitioners, the implication is practical rather than philosophical. Choosing between Gemini and Claude increasingly depends on the kind of thinking you need, not on abstract notions of supremacy. That, perhaps, is the clearest sign of progress.

FAQs

What is ARC-AGI-2 designed to test?

ARC-AGI-2 evaluates abstract reasoning on novel problems that resist memorization, focusing on pattern recognition and generalization.

Why is Gemini 3.1 Pro’s score significant?

Its 77.1 percent score represents a major leap over prior models, indicating real improvements in abstraction efficiency.

Does Claude 4.6 Sonnet underperform?

No. Claude remains highly competitive and excels in reliability and long-horizon planning despite a lower ARC-AGI-2 score.

Which model is better for developers?

Gemini suits multimodal system building and experimentation, while Claude excels in stable, long-running agent workflows.

Are benchmarks enough to judge AI intelligence?

Benchmarks matter, but deployment reliability, cost, and alignment increasingly define real-world value.